Overview

Ilum is a modular, open data lakehouse platform for Kubernetes and Apache Hadoop Yarn clusters. This page provides a technical overview of the platform's architecture, execution engines, catalogs, and integration ecosystem.

Platform Architecture

Core Components

Ilum is composed of three platform services that work together with a layered set of engines, catalogs, and data stores:

- ilum-ui: React-based web frontend. Hosts the SQL Editor, Table Explorer, Lineage view, Workloads management, security administration, and the Modules registry.

- ilum-core: The main backend service (Spring Boot WebFlux). Hosts the public REST API (/api/v1/...), job and cluster orchestration, multi-engine SQL execution, security, lineage capture, and gRPC/Kafka communication with running jobs.

- ilum-api: A dedicated microservice for module management. Drives Helm-based install, upgrade, and disable of optional Ilum modules (Airflow, Jupyter, Trino, Superset, etc.) at runtime via cluster-scoped RBAC. Future releases will extend ilum-api with Model Context Protocol (MCP) capabilities and open APIs for third-party extension.

The platform supports both Python (PySpark) and Scala programming languages for batch and interactive workloads, plus first-class SQL across every supported engine.

Execution Engines

Ilum exposes execution as a multi-engine surface fronted by the Apache Kyuubi SQL gateway. Each engine has a clear sweet spot:

- Apache Spark: Large-scale ETL, machine learning pipelines, and any workload that benefits from distributed processing across many executors.

- Trino: Interactive analytics across federated data sources, with fast response times on medium-to-large datasets.

- DuckDB: Single-node analytics on small-to-medium data, ideal for ad-hoc exploration and DuckLake-managed tables.

- Apache Flink: Low-latency stream processing.

The automatic engine router selects the appropriate engine based on data size, workload type, and locality. Manual override is available for every query and job through the Engine Selector in the SQL Editor and the engine field on the API.
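The routing idea can be illustrated with a toy heuristic. This is only a sketch: the thresholds, the `QueryProfile` fields, and the `choose_engine` function are hypothetical and are not Ilum's actual router, and locality is left out for brevity. It shows how data size and workload type could map to the engines listed above, with a manual override taking precedence.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical thresholds, chosen only for illustration.
SMALL_DATA_BYTES = 10 * 1024**3   # 10 GiB
LARGE_DATA_BYTES = 1024**4        # 1 TiB


@dataclass
class QueryProfile:
    estimated_bytes: int      # estimated input size of the query or job
    workload: str             # "interactive", "etl", or "streaming"
    federated: bool = False   # True if the query spans several data sources


def choose_engine(profile: QueryProfile, override: Optional[str] = None) -> str:
    """Return an engine name; an explicit override always wins."""
    if override is not None:
        return override
    if profile.workload == "streaming":
        return "flink"
    if profile.workload == "etl" or profile.estimated_bytes >= LARGE_DATA_BYTES:
        return "spark"
    if profile.federated or profile.estimated_bytes > SMALL_DATA_BYTES:
        return "trino"
    return "duckdb"
```

For example, `choose_engine(QueryProfile(5 * 1024**2, "interactive"))` picks DuckDB under these assumed thresholds, while passing `override="trino"` mirrors the manual Engine Selector.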

Learn more: Execution Engines, Apache Kyuubi SQL Gateway, SQL Editor

Open Lakehouse and Catalogs

Ilum is a true open lakehouse: tables defined once are usable from every engine, addressed through any of four catalog backends.

Table formats (ACID, schema evolution, time travel):

- Delta Lake: ACID transactions, time travel, schema evolution.

- Apache Iceberg: Partition evolution, hidden partitioning, large-scale analytics.

- Apache Hudi: Record-level upserts, incremental processing.

Catalog backends (selectable per workload):

- Hive Metastore (default-on): Centralized metadata compatible with every engine.

- Project Nessie: Git-style branching and version control for Iceberg tables.

- Unity Catalog: Databricks-compatible governance and access control.

- DuckLake (default-on): DuckDB-native catalog for local-first analytics.

The Ilum Tables abstraction lets you read and write Delta, Iceberg, and Hudi from Spark using identical code.

Learn more: Catalogs, Ilum Tables, Table Explorer

Data Layer

Ilum maintains its own metadata and persistence on either of two supported backends:

- PostgreSQL (primary, recommended): Reactive access via R2DBC; jOOQ-generated SQL DSL. Default for new installations.

- MongoDB (legacy, still supported): Available for existing deployments. Migration tooling ships with the platform (M001 through M009 migration scripts).

Object storage is handled through pluggable connectors: RustFS (bundled default), MinIO (bundled, opt-in), S3 (AWS and any S3-compatible service), GCS, Azure Blob (WASBS), and HDFS. For provider selection and the migration playbook, refer to Object Storage in Ilum.

Learn more: Migration: PostgreSQL backend, Cluster Storages

Communication Layer

Ilum supports two communication patterns between running jobs and the platform service:

gRPC (default):

- Direct connections between ilum-core and Spark, Trino, or Flink jobs.

- No message broker required.

- Recommended for single-instance deployments and development.

Apache Kafka (recommended for production HA):

- Enables High Availability and horizontal scaling of ilum-core.

- All event exchanges occur via automatically created topics.

- Supports distributed ilum-core instances behind a load balancer.

Modules and Extensibility

Ilum ships as a small core with a curated set of optional modules. Each module is a Helm sub-chart, and the ilum-api microservice drives install, upgrade, and disable operations at runtime without redeploying the platform.

The frontend Modules registry (ilum-modules.json) groups modules into categories: engines, storage, workflows, AI, governance, visualization, notebooks, observability, security. Operators toggle modules from the UI, and ilum-api issues the corresponding Helm operation.

The roadmap extends ilum-api with MCP support and open APIs, opening the module system to third-party extension and AI-driven module orchestration.

Learn more: Modules administration

Supported Cluster Types

Kubernetes Clusters: Native CRD-based Spark application deployment with pod lifecycle management. Supports GKE, EKS, AKS, and on-premise deployments with multi-cluster management from a central control plane.

Yarn Clusters: Apache Hadoop Yarn integration for hybrid architectures, configured using Yarn configuration files.

Local Clusters: Runs Spark applications where ilum-core is deployed, suitable for development and testing.

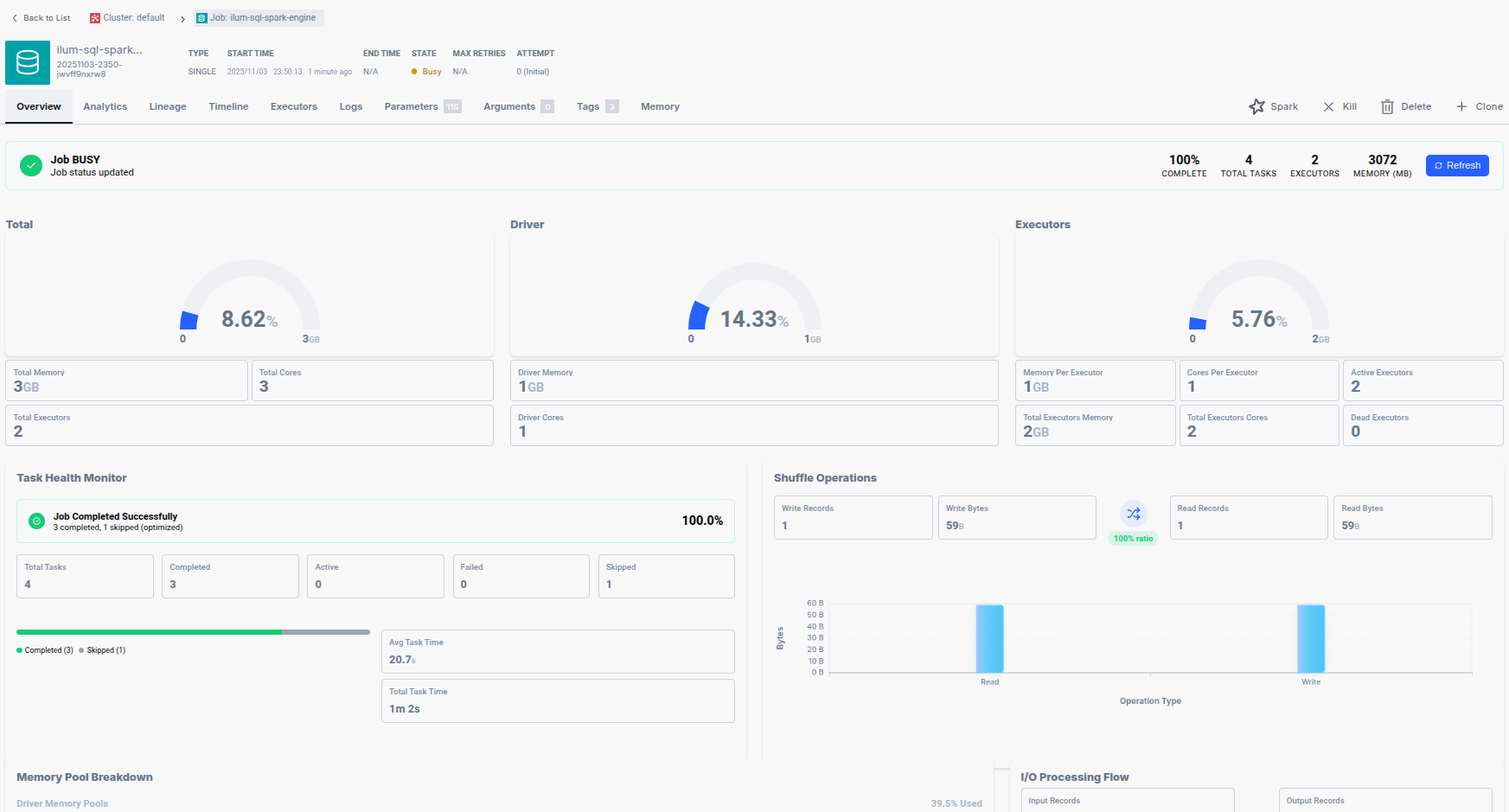

Workloads

Ilum manages five workload types as first-class concepts:

- Clusters: Compute targets (Kubernetes, Yarn, Local).

- Jobs: One-shot batch executions.

- Services: Long-running interactive sessions that execute code on demand without per-call initialization.

- Schedules: Cron-driven recurring executions.

- Requests: Ad-hoc query and batch submissions.

Every workload exposes Status, Logs, Metrics, and Description tabs, with URL-persisted filters and bulk actions in the UI.

Spark Job Types

Batch Jobs: Traditional Spark applications submitted for one-time execution via:

- The Ilum UI with visual job configuration.

- The REST API for programmatic submission.

- spark-submit integration for existing workflows.

Interactive Jobs (Ilum Groups / Services): Long-running Spark sessions that execute code immediately without initialization overhead. Multiple users can share the same Spark context by pointing to the same job ID.

Code Groups: Web-based code execution environment allowing direct Spark code writing and execution from the Ilum UI.

Key benefits:

- Effortless job management: create, delete, clone, stop, and resume with single-click operations.

- Comprehensive monitoring: track logs, CPU/memory usage, stages, and task structures.

- Session reusability: avoid repeated Spark session creation.

- Automated configuration: seamless integration with all enabled tools.

- Orchestration support: schedule-based execution and REST API for advanced workflows.

Learn more: Get Started, Run Spark Job, Run Interactive Job, Run Code Group

REST API

Ilum exposes its full surface area through an OpenAPI 3.0 specification:

# Submit a batch job
POST /api/v1/job

# Open or interact with an interactive group
POST /api/v1/group

# Execute a SQL query against any registered engine
POST /api/v1/sql/execute

# Schedule a recurring job
POST /api/v1/schedule

Every UI action is backed by a documented REST endpoint, which makes Ilum straightforward to drive from CI pipelines, custom orchestrators, or downstream services. Use cases include:

- Triggering Spark transformations from API gateways.

- Running on-demand Trino or DuckDB queries from BI tools.

- Submitting and monitoring streaming Flink jobs from external workflows.

- Driving Jupyter notebook kernels through HTTP.

Documentation: API Reference, API Playground
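As a rough illustration of driving the endpoints listed above from a script, the sketch below builds a JSON POST request with Python's standard library. Several details are assumptions rather than documented behavior: the base URL, the bearer-token header scheme, and the `statement` payload field are hypothetical, and only the endpoint path and the `engine` field come from this page. Consult the API Reference for the real request schema.

```python
import json
import urllib.request

# Assumption: address of an ilum-core instance reachable from the client.
BASE_URL = "http://ilum-core.example.internal:9888"


def build_request(path: str, payload: dict, token: str = "") -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for an Ilum endpoint."""
    headers = {"Content-Type": "application/json"}
    if token:
        # Assumption: API tokens are passed as bearer tokens.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(
        url=f"{BASE_URL}{path}",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


# "engine" is the override field mentioned on this page;
# "statement" is a hypothetical field name for the SQL text.
sql_request = build_request(
    "/api/v1/sql/execute",
    {"engine": "trino", "statement": "SELECT 1"},
)
```

Sending the request is then a matter of calling `urllib.request.urlopen(sql_request)` against a live deployment and decoding the JSON response.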

Job Orchestration

Built-in Scheduler: Create cron-based schedules for launching applications directly from the UI. See Schedule documentation.

External Orchestration: Integrate with enterprise workflow tools:

- Apache Airflow: DAG-based workflow orchestration with Spark operators.

- Kestra: Event-driven pipelines with Spark task execution.

- Mage: Data pipeline creation with visual interface.

- n8n: Fair-code workflow automation with native AI capabilities.

- Apache NiFi: Data flow automation and management.

Multi-Cluster Management

Centralized Control Plane

Manage heterogeneous clusters from a single interface:

Supported Environments:

- Cloud Kubernetes: GKE, EKS, AKS with auto-scaling.

- On-premise: Bare metal Kubernetes or Hadoop Yarn.

- Hybrid: Mixed cloud and on-premise for data sovereignty.

Capabilities:

- One-time certificate setup replaces complex kubeconfig management.

- UI-driven job deployment eliminates kubectl and spark-submit commands.

- Independent resource quotas (Kubernetes ResourceQuota) and LimitRange per cluster.

- Centralized monitoring and job scheduling.

Benefits over traditional approaches:

- No distributed kubeconfig files or certificate management.

- Simplified access control (grant cluster access once).

- Automatic certificate updates through Ilum.

- Visual job management without console commands.

Learn more: Clusters and Storages, Create Local Cluster, Create Kubernetes Cluster

Centralized Storage Management

Configure each storage once in Ilum, and every job automatically receives the credentials and settings it needs to access it:

Supported Storage Types: S3, GCS (Google Cloud Storage), WASBS (Azure Blob Storage), HDFS.

Benefits:

- Unified access across all storage systems from a single interface.

- Automatic Spark parameter configuration for each storage.

- No per-job storage configuration required.

- Multi-region storage support for latency reduction.

Example: the bundled object storage automatically configures the following Spark parameters for every job. The endpoint is the provider-neutral ilum-objectstorage alias, which routes to whichever provider is active (RustFS by default, MinIO when opted-in). See Object Storage in Ilum.

spark.hadoop.fs.s3a.endpoint=http://ilum-objectstorage:9000
spark.hadoop.fs.s3a.access.key=<read from ilum-objectstorage-credentials Secret>
spark.hadoop.fs.s3a.secret.key=<read from ilum-objectstorage-credentials Secret>
spark.hadoop.fs.s3a.path.style.access=true
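For a job launched outside Ilum that needs the same settings, the parameters above could be assembled by hand. The sketch below is a minimal illustration: the helper name and the two environment variable names are hypothetical, and the property keys are the standard Hadoop S3A settings shown above.

```python
import os


def s3a_spark_conf(endpoint: str, access_key: str, secret_key: str) -> dict:
    """Return the S3A-related Spark properties for one object store."""
    return {
        "spark.hadoop.fs.s3a.endpoint": endpoint,
        "spark.hadoop.fs.s3a.access.key": access_key,
        "spark.hadoop.fs.s3a.secret.key": secret_key,
        "spark.hadoop.fs.s3a.path.style.access": "true",
    }


conf = s3a_spark_conf(
    endpoint="http://ilum-objectstorage:9000",
    # Hypothetical variable names; in Ilum the values come from a Secret.
    access_key=os.environ.get("OBJECT_STORAGE_ACCESS_KEY", ""),
    secret_key=os.environ.get("OBJECT_STORAGE_SECRET_KEY", ""),
)

# Flatten into spark-submit arguments: --conf key=value, repeated per property.
submit_args = []
for key, value in conf.items():
    submit_args += ["--conf", f"{key}={value}"]
```

Inside Ilum none of this is needed, since the platform injects the equivalent properties into every job.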

Learn more: Create Storage, File Explorer

Data Exploration and Visualization

SQL Editor

The Ilum SQL Editor (formerly SQL Viewer) is a multi-engine query workbench:

- Engine Selector: Switch between Spark, Trino, DuckDB, and Flink per query.

- Engine lifecycle: Start, stop, and restart engines from the UI; live status indicators.

- Dialect transpilation: Translate queries between Spark SQL, Trino SQL, DuckDB SQL, and Flink SQL via the built-in transpiler.

- In-app SQL notebooks: Persistent multi-cell notebooks with per-cell execution.

- Saved queries: Folder-organized library with bulk operations and move support.

- Results tabs: Data, Logs, Statistics, Plan, Export, Visualization.

- Column profiling: Histogram, null counts, cardinality.

Learn more: SQL Editor

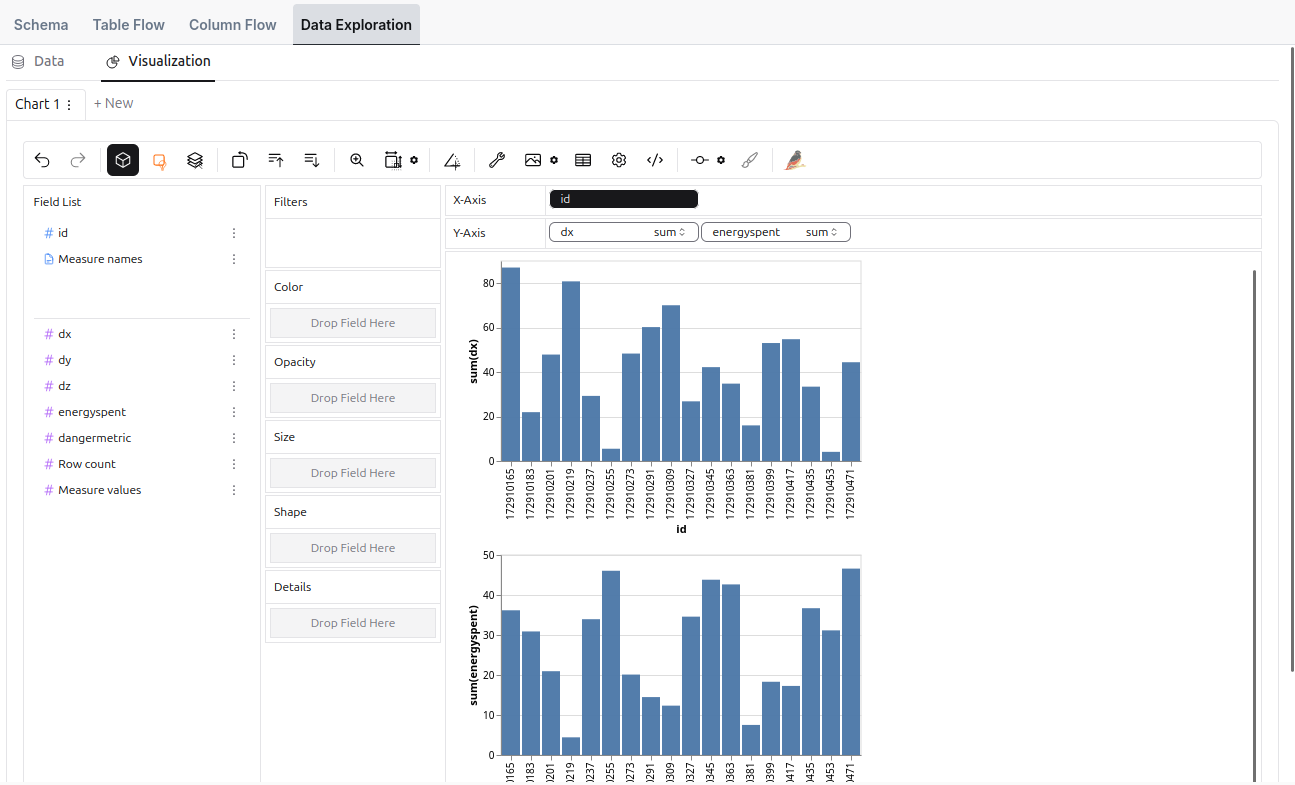

Table Explorer

Advanced data exploration tool providing:

- Visual data sampling and exploration.

- Chart building with mathematical functions.

- In-depth analysis with aggregations, filtering, and transformations.

- Browse Hive Metastore, Nessie (with branch switching), Unity Catalog, and DuckLake tables.

- Edit table descriptions inline.

- Configurable preview limit and auto-refresh.

Learn more: Table Explorer

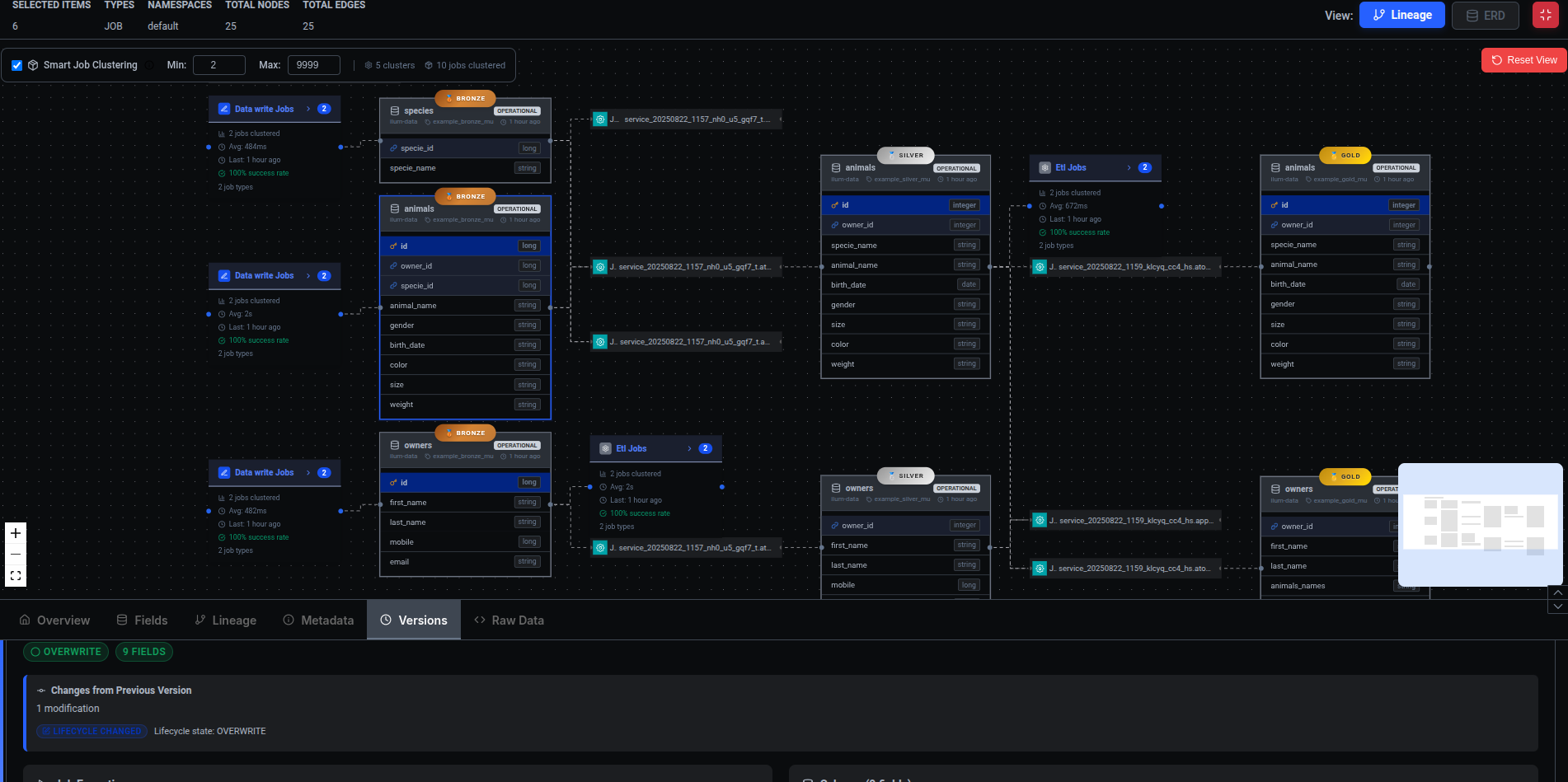

Data Lineage

Visualize data flows and transformations across the entire platform:

Capabilities:

- Job and dataset relationship visualization (React Flow DAG).

- Column-level lineage tracking.

- Automatic capture via OpenLineage integration.

- ERD ↔ lineage toggle for schema and runtime perspectives.

- Search, clustering, and graph-settings controls.

- Open-in-lineage from the Table Explorer.

Implementation: Ilum integrates Marquez and automatically configures OpenLineage listeners for every job. This gives visibility into data movement across jobs and datasets and makes it faster to trace issues back to their source.
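To give a sense of what an OpenLineage listener configuration for Spark typically looks like, the sketch below assembles the standard properties of the OpenLineage Spark integration. It is not a dump of what Ilum sets: the helper name, the Marquez URL, and the namespace value are hypothetical, and the exact values Ilum injects are not shown on this page.

```python
def openlineage_spark_conf(marquez_url: str, namespace: str) -> dict:
    """Return Spark properties that attach the OpenLineage listener."""
    return {
        # Registers the OpenLineage listener with the Spark driver.
        "spark.extraListeners": "io.openlineage.spark.agent.OpenLineageSparkListener",
        # Sends lineage events over HTTP to an OpenLineage-compatible backend.
        "spark.openlineage.transport.type": "http",
        "spark.openlineage.transport.url": marquez_url,
        # Logical namespace under which jobs are grouped in the lineage graph.
        "spark.openlineage.namespace": namespace,
    }


# Hypothetical endpoint and namespace, for illustration only.
lineage_conf = openlineage_spark_conf("http://marquez.example.internal:5000", "analytics")
```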

Learn more: Data Lineage

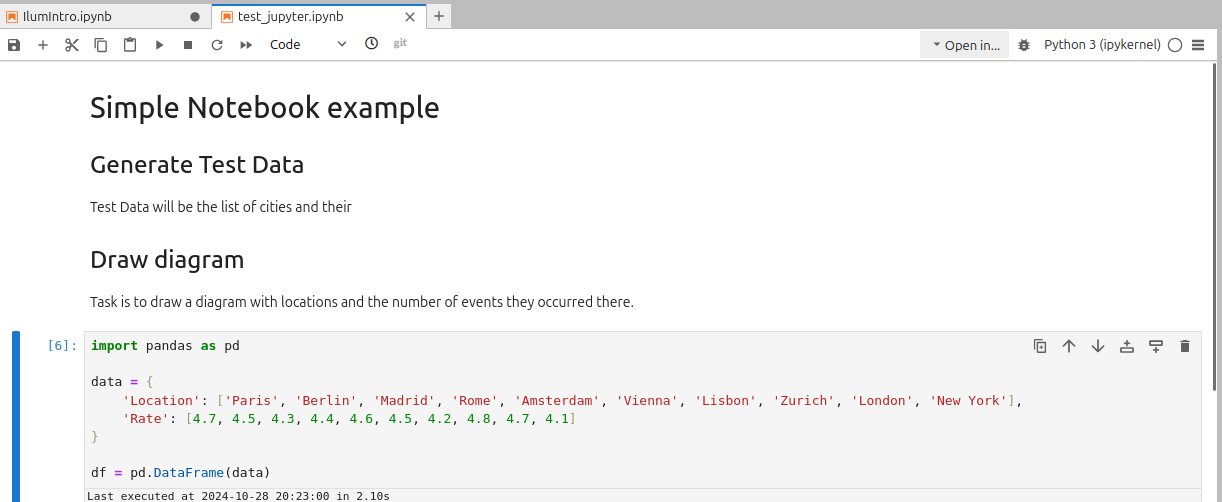

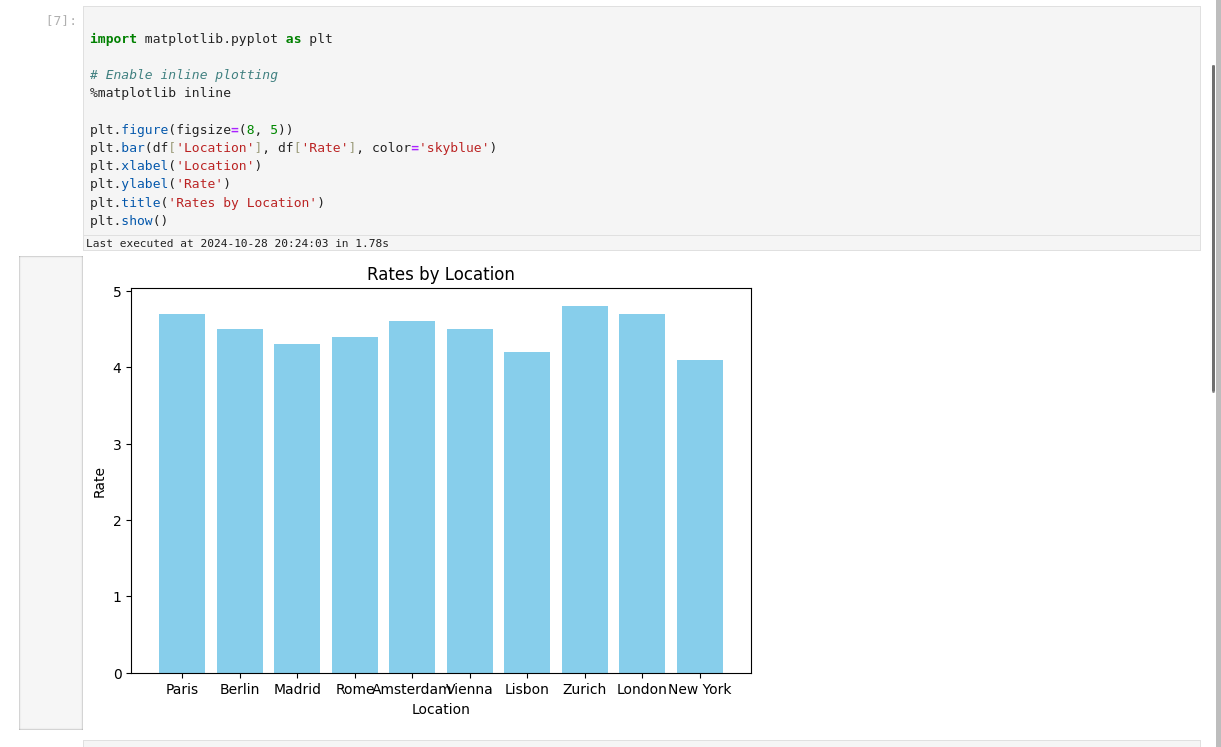

Development Environments

Notebooks

Ilum integrates production-ready notebook environments:

JupyterLab (default-on): Modern web-based IDE for single-user workflows with Git integration and Spark Magic.

JupyterHub (Enterprise): Multi-user orchestrator providing:

- Enterprise authentication (LDAP/SSO via Ilum).

- User isolation with per-user JupyterLab workspaces.

- Centralized resource management on Kubernetes.

- Built-in version control via Gitea.

Apache Zeppelin: Multi-language notebook emphasizing Spark analytics with flexible visualization.

In-app SQL notebooks: Multi-engine notebook cells inside the Ilum SQL Editor, persisted in the platform metadata store.

All environments connect to Ilum via ilum-livy-proxy (legacy) or directly through the multi-engine SQL surface, binding sessions to Ilum Groups and enabling interactive execution without manual configuration.

Learn more: Notebooks, Notebook Usage

Spark Connect

Spark Connect provides a client-server architecture allowing remote Spark job execution:

Benefits:

- Client-server isolation prevents crashes.

- Independent version upgrades.

- Secure remote cluster access.

- IDE and notebook connectivity without a full Spark installation.

- Kubernetes-aware proxy for accessing Spark Connect endpoints across cluster boundaries.

Ilum deploys Spark Connect servers as standard jobs, accessible through pod names, IPs, or Kubernetes services.
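A client session against such a server might be opened as sketched below. The host name is a placeholder for whichever pod name, IP, or Kubernetes service exposes the server, and 15002 is the Spark Connect default port; whether a given Ilum deployment uses that port is an assumption. The `pyspark` import is deferred so the URL helper works without a Spark installation.

```python
def spark_connect_url(host: str, port: int = 15002) -> str:
    """Build a Spark Connect connection string of the form sc://host:port."""
    return f"sc://{host}:{port}"


def connect(host: str, port: int = 15002):
    """Open a remote SparkSession (requires pyspark 3.4+ with Spark Connect support)."""
    # Imported lazily so the module loads even where pyspark is absent.
    from pyspark.sql import SparkSession

    return SparkSession.builder.remote(spark_connect_url(host, port)).getOrCreate()
```

With a reachable server, `connect("spark-connect.example.internal").range(5).show()` would run the query remotely while the client process stays lightweight.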

Learn more: Spark Connect

MLOps and Data Science

Data Science Platform

Ilum provides an end-to-end platform streamlining the entire ML lifecycle:

Pre-configured Environments:

- Direct Spark, Trino, and DuckDB connectivity.

- Catalog access to Delta, Iceberg, and Hudi tables across Hive, Nessie, Unity, and DuckLake.

- Comprehensive ML libraries (scikit-learn, XGBoost, PyTorch, TensorFlow).

- Starter templates for common ML scenarios.

Model Development:

- Integrated experiment tracking via MLflow.

- Feature engineering pipelines with Spark ML.

- Automated training and inference pipelines.

- Version control and collaborative development.

Production Deployment:

- Model registry with lifecycle management.

- Auto-scaling endpoints with monitoring.

- A/B testing support.

- Scheduled retraining jobs.

Learn more: Data Science Platform

MLflow Integration

Complete ML lifecycle management:

- Experiment tracking with parameters, metrics, and artifacts.

- Model registry with version control.

- Lifecycle stage transitions (development to staging to production).

- Direct integration with Ilum deployment pipelines.

Configuration: Enable with --set mlflow.enabled=true in the Helm deployment.

Learn more: MLflow

LangFuse

LangFuse provides LLM observability for AI-driven workloads. Trace prompts, completions, and agent steps alongside the data pipelines that produced their inputs. Enabled as an optional module via ilum-api.

AI Data Analyst

The AI Data Analyst provides assistant tooling for SQL exploration. Capabilities are evolving; refer to the dedicated page for the current shipping surface and the roadmap.

Learn more: AI Data Analyst

Business Intelligence Integration

Apache Superset

Open-source data visualization platform included in Ilum:

- Pre-configured connections to Ilum SQL via Kyuubi.

- Interactive dashboards and reports.

- Multiple chart types and customization.

- Free alternative to Tableau and PowerBI.

Configuration: Enable with --set superset.enabled=true.

Learn more: Superset

Streamlit

Streamlit apps deployed alongside Ilum for lightweight Python-based analytics, ML demos, and internal tools. Enabled as an optional module via ilum-api.

Tableau and PowerBI

External BI tool connectivity via JDBC:

- Kyuubi JDBC driver for Ilum SQL connectivity.

- Direct access to all catalog tables (Hive, Nessie, Unity, DuckLake).

- Load balancer exposure for external access.

- Automatic Spark configuration.

Learn more: Tableau Integration

Monitoring and Observability

Centralized Monitoring

Spark History Server: Comprehensive job monitoring accessible from the Ilum UI:

- Job timeline and stage metrics.

- Executor resource utilization.

- CPU and memory usage tracking.

- Task-level performance details.

Event logs are stored on the default Ilum storage and accessible across multi-cluster deployments.

Metrics Collection:

- Prometheus + Grafana: Kube Prometheus stack with pre-configured dashboards (optional, default-off).

- Graphite: Push-based metrics ideal for multi-cluster environments.

- All Ilum jobs are automatically configured to push metrics.

Log Aggregation:

- Loki + Promtail: Centralized log gathering and querying (optional, default-off).

- Efficient log management across the entire infrastructure.

- Query capabilities suited to distributed environments.

Lineage events: Every job emits OpenLineage events captured by Marquez (default-on).

Learn more: Monitoring, Clusters and Storages

Security

Authentication and Authorization

Built-in RBAC: Role-based access control with user and group management through the Ilum UI, enforced by the RequiresPermission framework on every endpoint.

LDAP/Active Directory: Enterprise directory service integration for centralized user management.

Learn more: LDAP

OAuth2/OIDC: Integration with external identity providers:

- Keycloak, Okta, Azure AD, Google, GitLab.

- PKCE flow support for secure public clients.

- Automatic user provisioning from JWT tokens.

Learn more: OAuth2 Security

Identity Provider Mode: Deploy Ilum as an OAuth2 provider (via embedded Ory Hydra) for the embedded tools it manages: Airflow, Superset, Grafana, Gitea, MinIO, and others.

Learn more: OAuth Provider

API Tokens: Long-lived credentials for programmatic access, scoped by RBAC permission set.

RBAC Security Modes

Unrestricted Mode (default): Cluster-wide permissions for simplified deployment in development environments.

Restricted Mode: Namespace-scoped permissions implementing the principle of least privilege:

- Enhanced security for production.

- Namespace isolation and reduced attack surface.

- Compliance-ready configuration.

- Limited to deployment namespace only.

Learn more: RBAC Security Modes

Network Security

- TLS/mTLS for inter-service communication.

- Kubernetes Network Policies for pod-to-pod restrictions.

- Certificate-based encryption.

- Egress controls.

Learn more: Internal Security, Data Access

Production Deployment

Deployment Architecture

Ilum follows a modular architecture separating core platform services from optional data, analytics, and integration features:

Base Installation (suitable for development and small clusters):

- ilum-core and ilum-ui.

- Spark 4.x execution.

- DuckDB for local-first analytics.

- Bundled object storage (RustFS by default, MinIO opt-in).

- PostgreSQL metadata store.

- Marquez lineage (default-on).

- Jupyter notebooks (default-on).

- Hive Metastore (default-on).

Optional Modules (enabled per deployment via ilum-api):

- Engines: Trino, Apache Flink.

- Catalogs: Project Nessie, Unity Catalog.

- Notebooks: JupyterHub (Enterprise), Apache Zeppelin.

- Orchestration: Airflow, Kestra, Mage, n8n, NiFi.

- BI: Superset, Streamlit.

- AI/ML: MLflow, LangFuse.

- Observability: Kube Prometheus stack, Loki, Promtail.

- Identity: Hydra (Ilum as IdP), OpenLDAP.

- Comms: Kafka (recommended for HA).

- Storage: ClickHouse (analytics store).

Namespace Separation Strategy (recommended for production):

- Separate namespaces for PostgreSQL, MongoDB, Kafka, MinIO.

- Enhanced security through namespace-level isolation.

- Independent resource quotas and scaling.

- Simplified maintenance and upgrades.

Helm-based Installation

# Add the Ilum repository
helm repo add ilum https://charts.ilum.cloud
helm repo update

# Recommended data-platform deployment
helm install ilum ilum/ilum \
  --set ilum-hive-metastore.enabled=true \
  --set ilum-core.metastore.enabled=true \
  --set ilum-core.metastore.type=hive \
  --set ilum-sql.enabled=true \
  --set ilum-core.sql.enabled=true \
  --set global.lineage.enabled=true

# Minimal deployment (core only)
helm install ilum ilum/ilum

Configuration Management: All components are configurable via Helm values. Use the module selector for custom integration stacks.
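When installs are scripted, for instance from a CI pipeline, the same command can be generated from a dictionary of values. The sketch below is one possible approach: the helper name is hypothetical, and the value keys are copied from the recommended deployment above.

```python
import shlex

# Values taken from the recommended data-platform deployment above.
RECOMMENDED_VALUES = {
    "ilum-hive-metastore.enabled": "true",
    "ilum-core.metastore.enabled": "true",
    "ilum-core.metastore.type": "hive",
    "ilum-sql.enabled": "true",
    "ilum-core.sql.enabled": "true",
    "global.lineage.enabled": "true",
}


def helm_install_command(release: str, chart: str, values: dict) -> list:
    """Return the argv list for `helm install` with one --set per value."""
    cmd = ["helm", "install", release, chart]
    for key, value in values.items():
        cmd += ["--set", f"{key}={value}"]
    return cmd


command = helm_install_command("ilum", "ilum/ilum", RECOMMENDED_VALUES)
# Print a copy-pasteable shell line; pass `command` to subprocess.run to execute it.
print(shlex.join(command))
```

Keeping the command as a list avoids shell quoting problems, and an empty dictionary yields the minimal `helm install ilum ilum/ilum` form.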

Learn more: Production Deployment, Get Started

High Availability

ilum-core is designed for stateless operation:

- Automatic state recovery after crashes.

- Horizontal scaling based on load.

- HA support via Kafka communication.

- PostgreSQL, MongoDB, Kafka, and MinIO support HA deployments.

Learn more: Architecture

Use Cases

Ilum addresses diverse big data scenarios across industries:

Hadoop and Cloudera Migration: Simplify the transition from Hadoop and Cloudera CDP estates to Kubernetes with Yarn compatibility, Hive Metastore reuse, and incremental migration support. For large estates, Ilum Enterprise includes Bifrost, a dedicated migration automation tool that handles discovery, phased execution, data validation, and rollback end to end.

Multi-Engine Analytics: Use Trino and DuckDB for fast interactive analytics on the same lakehouse tables that Spark uses for heavy ETL. The automatic engine router places each query on the engine that handles it most efficiently.

Real-Time ML Interaction: Deploy ML models on Spark clusters with REST API for real-time predictions (for example, e-commerce personalization).

Automated Machine Learning: Programmatically submit training, testing, and refinement jobs with interactive Jupyter integration and MLflow tracking.

Fraud Detection: Real-time transaction processing with ML algorithms accessible via REST API for immediate alerts.

Network Optimization: Predict network outages through ML models for proactive maintenance in telecommunications.

Learn more: Use Cases, Transaction Use Case

Additional Resources

- API Documentation: API Reference for REST endpoints and programmatic access.

- Configuration Guides: Ilum Core, Ilum UI, All-in-One.

- Upgrade Information: Upgrade Notes, Migration Guide.

- Support: Help and Support.

Next Steps

- Installation: Follow the Get Started guide for initial deployment.

- First Job: Submit your first Spark job.

- Learning: Take the official Ilum course.

- Production: Review production deployment best practices.