Get Started

This guide covers deploying Ilum on Kubernetes and submitting your first Spark job.

Installation Architecture

Ilum follows a modular architecture where core platform services are separated from optional engines, catalogs, and integrations. The base installation provides:

- ilum-core: Main backend (REST API, jobs, multi-engine SQL, lineage, security)

- ilum-ui: Web frontend (SQL Editor, Table Explorer, Lineage, Workloads)

- ilum-api: Module-management microservice that installs, upgrades, and disables optional modules at runtime via Helm

- Apache Spark 4.x job orchestration on Kubernetes

- DuckDB for local-first SQL analytics, with the DuckLake catalog enabled by default

- Hive Metastore for centralized table metadata

- PostgreSQL as the primary metadata store (MongoDB remains supported for legacy deployments)

- RustFS (default) or MinIO (opt-in) S3-compatible object storage. See Object Storage in Ilum.

- Apache Kyuubi SQL gateway for multi-engine query routing

- Marquez for OpenLineage-based data lineage (default-on)

- Jupyter notebook integration

- REST API for programmatic access

Optional modules enable additional engines, catalogs, and integrations:

- Engines: Trino, Apache Flink

- Catalogs: Project Nessie, Unity Catalog

- Notebooks: JupyterHub (Enterprise), Apache Zeppelin

- Orchestration: Apache Airflow, Kestra, Mage, n8n, Apache NiFi

- BI and visualization: Apache Superset, Streamlit

- AI and ML: MLflow, LangFuse

- Observability: Kube Prometheus stack, Loki, Promtail

- Identity: Ory Hydra (Ilum as IdP), OpenLDAP

Resource Planning:

- Base deployment: 8-12 GB RAM, 6 CPU cores

- With Hive Metastore, Marquez lineage, Kyuubi, and PostgreSQL: 18 GB RAM, 12 CPU cores

- Production workloads: Size based on concurrent executor requirements across all enabled engines

Module selection impacts pod count, storage IOPS, and network traffic. Each module runs in dedicated pods with configurable resource limits.
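To make "size based on concurrent executor requirements" concrete, the sketch below adds up the memory requested by executor pods. It assumes Spark's default overhead rule for JVM executors (overhead = max(10% of executor memory, 384 MiB), as with the default spark.executor.memoryOverheadFactor); non-JVM jobs or an explicit spark.executor.memoryOverhead change this, so check the documentation for your Spark version. The helper names and the example workload are hypothetical, and driver pods plus Ilum's own services need capacity on top of this figure.

```python
"""Rough sizing of concurrent Spark executor memory (a sketch, not an Ilum sizing tool)."""

# Assumed Spark defaults for JVM executors; verify against your Spark version.
OVERHEAD_FACTOR = 0.10
MIN_OVERHEAD_MIB = 384


def executor_pod_memory_mib(executor_memory_mib: int,
                            overhead_factor: float = OVERHEAD_FACTOR) -> int:
    """Memory one executor pod requests: executor memory plus overhead."""
    overhead = max(MIN_OVERHEAD_MIB, int(executor_memory_mib * overhead_factor))
    return executor_memory_mib + overhead


def total_executor_memory_mib(jobs: list[tuple[int, int]]) -> int:
    """Sum pod memory over concurrent jobs given as (executor_count, executor_memory_mib)."""
    return sum(count * executor_pod_memory_mib(mem) for count, mem in jobs)


if __name__ == "__main__":
    # Hypothetical workload: two jobs running at the same time.
    concurrent_jobs = [(4, 2048), (2, 8192)]
    total = total_executor_memory_mib(concurrent_jobs)
    print(f"Executor memory needed: {total} MiB (~{total / 1024:.1f} GiB)")
```

For small executors the 384 MiB floor dominates, which is why many small executors cost proportionally more memory than a few large ones.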

Prerequisites

In order to run Ilum on your machine, you'll need the following:

Kubernetes Cluster

Ilum deploys exclusively on Kubernetes using Helm charts. Any CNCF-compliant Kubernetes distribution works:

Supported Platforms:

- Local development: Minikube, Microk8s, K3s, Docker Desktop

- Cloud-managed: GKE, EKS, AKS, DigitalOcean Kubernetes

- Self-hosted: K8s on bare metal, OpenShift, Rancher

Architecture Support:

- Multi-arch container images (amd64, arm64)

- Tested on Linux, macOS (M1/M2), Windows WSL2

Quick Local Setup: For development/testing without an existing cluster, use Minikube (installation guide) or Microk8s (installation guide).

This guide uses Minikube for examples. Verify installation with:

```shell
minikube version
```

Issues with Minikube on Windows OS

If you are using Windows, you may encounter issues with Minikube related to the driver.

On Windows, Minikube can use a variety of drivers (hosts for the Kubernetes cluster), but generally you want either Hyper-V or Docker. If you have Docker installed, either use Minikube with the Docker driver or enable the built-in Kubernetes support in Docker Desktop.

If Docker is not available, use the Hyper-V driver; you can consult this guide for the setup. Keep in mind that Minikube needs administrator privileges to interface with Hyper-V.

kubectl (Logs & Troubleshooting)

Install kubectl to inspect Ilum resources and stream logs.

Install

macOS:

```shell
brew install kubectl
```

Windows:

```shell
winget install -e --id Kubernetes.kubectl
# or, with Chocolatey:
choco install kubernetes-cli
```

Linux (amd64; replace amd64 with arm64 in the URL on ARM machines):

```shell
curl -LO "https://dl.k8s.io/release/$(curl -Ls https://dl.k8s.io/release/stable.txt)/bin/linux/amd64/kubectl"
sudo install -m 0755 kubectl /usr/local/bin/kubectl
```

Quick use

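```shell
# List pods in the namespace
kubectl get pods -n <ns>
# Stream logs from all containers of a pod
kubectl logs -n <ns> <pod> --all-containers -f
# Show a pod's configuration, status, and recent events
kubectl describe pod -n <ns> <pod>
# List namespace events, sorted by last occurrence
kubectl get events -n <ns> --sort-by=.lastTimestamp
```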
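When many pods are running, a small script can narrow the output down to the ones worth inspecting with kubectl describe and kubectl logs. This is a minimal sketch that parses kubectl get pods -o json using standard Kubernetes pod fields (status.phase, containerStatuses); the function names and what counts as a "problem" pod (waiting, not Ready, restarted, or Pending with no containers) are our own assumptions.

```python
"""List pods that look unhealthy, from `kubectl get pods -o json` output (sketch)."""
import json
import subprocess


def problem_pods(pod_list: dict) -> list[str]:
    """Return one line per container that is waiting, not Ready, or has restarted,
    plus one line per Pending pod that has no container statuses yet."""
    problems = []
    for pod in pod_list.get("items", []):
        name = pod["metadata"]["name"]
        status = pod.get("status", {})
        phase = status.get("phase", "Unknown")
        if phase == "Succeeded":
            continue  # finished pods (e.g. completed jobs) are expected to be not Ready
        statuses = status.get("containerStatuses", [])
        if phase == "Pending" and not statuses:
            problems.append(f"{name}: phase=Pending, no containers started yet")
            continue
        for cs in statuses:
            waiting = cs.get("state", {}).get("waiting")
            restarts = cs.get("restartCount", 0)
            if waiting or not cs.get("ready", False) or restarts > 0:
                reason = waiting.get("reason", "") if waiting else ""
                detail = f"restarts={restarts} {reason}".rstrip()
                problems.append(
                    f"{name}/{cs['name']}: phase={phase} ready={cs.get('ready')} {detail}"
                )
    return problems


def fetch_pods(namespace: str) -> dict:
    """Run kubectl (must be on PATH and pointed at your cluster) and parse the JSON."""
    result = subprocess.run(
        ["kubectl", "get", "pods", "-n", namespace, "-o", "json"],
        check=True, capture_output=True, text=True,
    )
    return json.loads(result.stdout)


if __name__ == "__main__":
    for line in problem_pods(fetch_pods("default")):  # replace with your Ilum namespace
        print(line)
```

Keeping the parsing separate from the kubectl call makes the filter easy to test against saved JSON.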

Helm

Helm is a package manager for Kubernetes that allows you to define, install, and upgrade Kubernetes applications. If you haven't installed Helm yet, you can find instructions here.

Cluster Resource Allocation

Minikube resource allocation determines available capacity for Spark executors and Ilum services.

Configuration Options:

For full module testing (metadata, lineage, SQL):

```shell
minikube start --cpus 12 --memory 18192 --addons metrics-server
```

For minimal Spark workloads:

```shell
minikube start --cpus 6 --memory 12288 --addons metrics-server
```

The metrics-server addon exposes pod-level CPU/memory metrics to the Ilum UI dashboard.
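Before installing, you can compare the cluster's allocatable capacity with the figures above. The sketch below sums status.allocatable from kubectl get nodes -o json and parses common Kubernetes quantity formats (plain numbers, millicores such as 500m, and Ki/Mi/Gi/Ti or k/M/G/T memory suffixes). The helper names are hypothetical, and allocatable values may be somewhat lower than what you passed to minikube start because the node reserves some resources.

```python
"""Sum a cluster's allocatable CPU and memory to compare with a resource profile (sketch)."""
import json
import subprocess

# Kubernetes quantity suffixes, expressed as byte multipliers.
_BINARY = {"Ki": 1024, "Mi": 1024**2, "Gi": 1024**3, "Ti": 1024**4}
_DECIMAL = {"k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12}
_MIB = 1024**2


def parse_cpu(quantity: str) -> float:
    """'12' -> 12.0 cores; '11500m' (millicores) -> 11.5 cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def parse_memory_mib(quantity: str) -> float:
    """'16Gi' -> 16384.0 MiB; a value without a suffix is plain bytes."""
    for suffix, factor in {**_BINARY, **_DECIMAL}.items():
        if quantity.endswith(suffix):
            return float(quantity[: -len(suffix)]) * factor / _MIB
    return float(quantity) / _MIB


def cluster_allocatable(nodes: dict) -> tuple[float, float]:
    """Total allocatable (CPU cores, MiB) across all nodes in `kubectl get nodes -o json`."""
    cpus = sum(parse_cpu(n["status"]["allocatable"]["cpu"]) for n in nodes["items"])
    mem = sum(parse_memory_mib(n["status"]["allocatable"]["memory"]) for n in nodes["items"])
    return cpus, mem


if __name__ == "__main__":
    result = subprocess.run(
        ["kubectl", "get", "nodes", "-o", "json"],
        check=True, capture_output=True, text=True,
    )
    cpus, mem_mib = cluster_allocatable(json.loads(result.stdout))
    # Compare against the minimal Minikube profile above (6 CPUs, 12288 MiB).
    print(f"Allocatable: {cpus:g} CPUs, {mem_mib:.0f} MiB (minimal profile: 6 CPUs, 12288 MiB)")
```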

Minikube Limitations:

- Single-node cluster (no distributed executor scheduling)

- Suitable for functional testing, not performance benchmarks

For production deployments, see Production Setup.

For a streamlined installation experience, you can use the Ilum CLI instead of manual Helm commands. The CLI wraps Helm and kubectl, providing guided setup, module management, and one-command deployment with ilum quickstart. See the CLI Getting Started guide.

Helm Deployment

Add the Ilum chart repository:

```shell
helm repo add ilum https://charts.ilum.cloud
helm repo update
```

Option 1: Data Platform (Recommended)

Includes Hive Metastore for table metadata, the multi-engine SQL gateway (Kyuubi), and OpenLineage data tracking.

```shell
helm install ilum ilum/ilum \
  --set ilum-hive-metastore.enabled=true \
  --set ilum-core.metastore.enabled=true \
  --set ilum-core.metastore.type=hive \
  --set ilum-sql.enabled=true \
  --set ilum-core.sql.enabled=true \
  --set global.lineage.enabled=true
```

Capabilities enabled:

- Centralized Hive Metastore (compatible with Spark, Trino, DuckDB, Flink)

- Multi-engine SQL execution via Kyuubi (Spark and Trino out of the box; DuckDB available locally)

- Automatic lineage capture via OpenLineage, visualized in Marquez

- Table and column-level lineage in the Ilum UI

Resource overhead: ~8 GB RAM, 6 CPU cores for metadata, lineage, and SQL gateway services.
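The --set flags in Option 1 are shorthand for a nested values tree. The simplified sketch below shows how the dotted keys nest, which is handy if you prefer to keep these settings in a values file. It only approximates Helm's handling of simple booleans, integers, and strings (no escaped dots, list indices, or comma-separated pairs), and the helper names are hypothetical.

```python
"""Show how Helm-style dotted --set keys nest into a values tree (simplified sketch)."""
import json


def parse_scalar(raw: str):
    """Rough approximation of Helm's typing for simple values: booleans, ints, else string."""
    if raw in ("true", "false"):
        return raw == "true"
    try:
        return int(raw)
    except ValueError:
        return raw


def set_flags_to_values(flags: list[str]) -> dict:
    """Turn ['a.b=1', 'a.c=x'] into {'a': {'b': 1, 'c': 'x'}}."""
    values: dict = {}
    for flag in flags:
        key, _, raw = flag.partition("=")
        *parents, leaf = key.split(".")
        node = values
        for part in parents:
            node = node.setdefault(part, {})  # create intermediate levels on demand
        node[leaf] = parse_scalar(raw)
    return values


OPTION_1_FLAGS = [
    "ilum-hive-metastore.enabled=true",
    "ilum-core.metastore.enabled=true",
    "ilum-core.metastore.type=hive",
    "ilum-sql.enabled=true",
    "ilum-core.sql.enabled=true",
    "global.lineage.enabled=true",
]

if __name__ == "__main__":
    print(json.dumps(set_flags_to_values(OPTION_1_FLAGS), indent=2))
```

Because JSON is valid YAML, the printed tree can be saved as values.yaml and passed with helm install ilum ilum/ilum -f values.yaml instead of repeating the flags.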

Option 2: Minimal Deployment

Minimal deployment for development and testing. Includes ilum-core, ilum-ui, ilum-api, Spark 4.x execution, DuckDB, and the DuckLake catalog. No external Hive Metastore or lineage tracking.

```shell
helm install ilum ilum/ilum
```

Use case: Development, testing, ephemeral workloads where table schemas are managed externally.

Option 3: Custom Module Selection

Use the module selector to generate Helm commands with specific integrations (Trino, Nessie, Unity Catalog, Airflow, Superset, MLflow, LangFuse, etc.). Optional modules can also be enabled and disabled at runtime through the in-product Modules registry, which is backed by the ilum-api microservice.

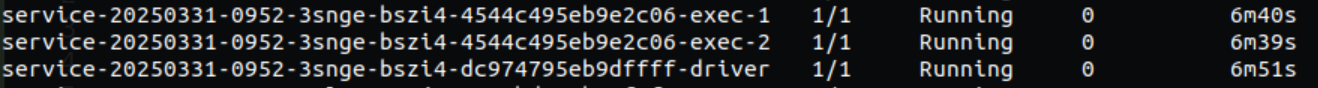

Deployment time: Services typically reach ready state within 2-6 minutes. Monitor with:

```shell
kubectl get pods -w
```

For advanced configuration options, see Helm chart documentation.
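If you are scripting the installation, kubectl wait --for=condition=Ready pods --all --timeout=600s can block until pods are Ready. The Python sketch below does the same kind of polling by hand so you can customize it: it treats completed (Succeeded) pods as done and takes the pod-fetching function as a parameter so it can be tested without a cluster. Its names and defaults (10-minute timeout, 10-second interval) are assumptions, not Ilum settings.

```python
"""Poll until every pod in a namespace is Ready (sketch)."""
import json
import subprocess
import time
from typing import Callable


def all_pods_ready(pod_list: dict) -> bool:
    """True when at least one pod exists and every non-completed pod has Ready=True."""
    pods = pod_list.get("items", [])
    if not pods:
        return False
    for pod in pods:
        status = pod.get("status", {})
        if status.get("phase") == "Succeeded":
            continue  # completed pods never become Ready; treat them as done
        conditions = {c["type"]: c["status"] for c in status.get("conditions", [])}
        if conditions.get("Ready") != "True":
            return False
    return True


def kubectl_pods(namespace: str) -> dict:
    """Fetch pods as JSON via kubectl (must be on PATH)."""
    result = subprocess.run(
        ["kubectl", "get", "pods", "-n", namespace, "-o", "json"],
        check=True, capture_output=True, text=True,
    )
    return json.loads(result.stdout)


def wait_until_ready(fetch: Callable[[], dict],
                     timeout_s: float = 600, interval_s: float = 10) -> bool:
    """Call fetch() until all pods are Ready; return False once the next wait would pass the timeout."""
    deadline = time.monotonic() + timeout_s
    while True:
        if all_pods_ready(fetch()):
            return True
        if time.monotonic() + interval_s >= deadline:
            return False
        time.sleep(interval_s)


if __name__ == "__main__":
    ok = wait_until_ready(lambda: kubectl_pods("default"))  # replace with your namespace
    print("all pods ready" if ok else "timed out waiting for pods")
```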

Installation Problems

If you run into problems installing Ilum, see the troubleshooting section here or email us ([email protected]).

UI Access

The Ilum web interface provides job management, resource monitoring, and SQL query capabilities.

Default credentials: admin / admin

Minikube Service Exposure

```shell
minikube service ilum-ui
```

This returns a URL reachable from your machine (e.g., http://192.168.49.2:31777).

NodePort (Default)

The UI service is exposed via NodePort on 31777 by default. Find your node IP:

```shell
kubectl get nodes -o wide
```

Access at http://<NODE_IP>:31777.

Port Forwarding (Development)

```shell
kubectl port-forward svc/ilum-ui 9777:9777
```

Access at http://localhost:9777.
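To confirm which access method works from your machine, a quick HTTP check is enough. This standard-library sketch treats any HTTP response (including 4xx/5xx) as reachable and connection errors or timeouts as not reachable; it deliberately bypasses any configured HTTP proxy because the targets are local. ui_reachable is a hypothetical helper, and the candidate URLs are the examples used above, so yours may differ.

```python
"""Check whether the Ilum UI answers at a given URL (standard-library sketch)."""
import http.client
import urllib.error
import urllib.request

# An opener with an empty ProxyHandler ignores http_proxy/https_proxy settings,
# which is what we want for local or node addresses.
_NO_PROXY_OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def ui_reachable(url: str, timeout_s: float = 5.0) -> bool:
    """True if the server sends any HTTP response, False on connection errors or timeouts."""
    try:
        with _NO_PROXY_OPENER.open(url, timeout=timeout_s):
            return True
    except urllib.error.HTTPError:
        return True  # the server answered, just with an error status
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        return False


if __name__ == "__main__":
    # Example URLs from this section; adjust host and port to your setup.
    for candidate in ("http://localhost:9777", "http://192.168.49.2:31777"):
        print(candidate, "reachable" if ui_reachable(candidate) else "not reachable")
```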

Ingress Controller (Production)

For production deployments, configure an Ingress resource with TLS termination. See Ingress configuration guide for details.

Authentication:

- Default admin account: admin/admin

- Change the default credentials via Helm values or UI user management

- LDAP/OAuth2 integration available (see Security docs)

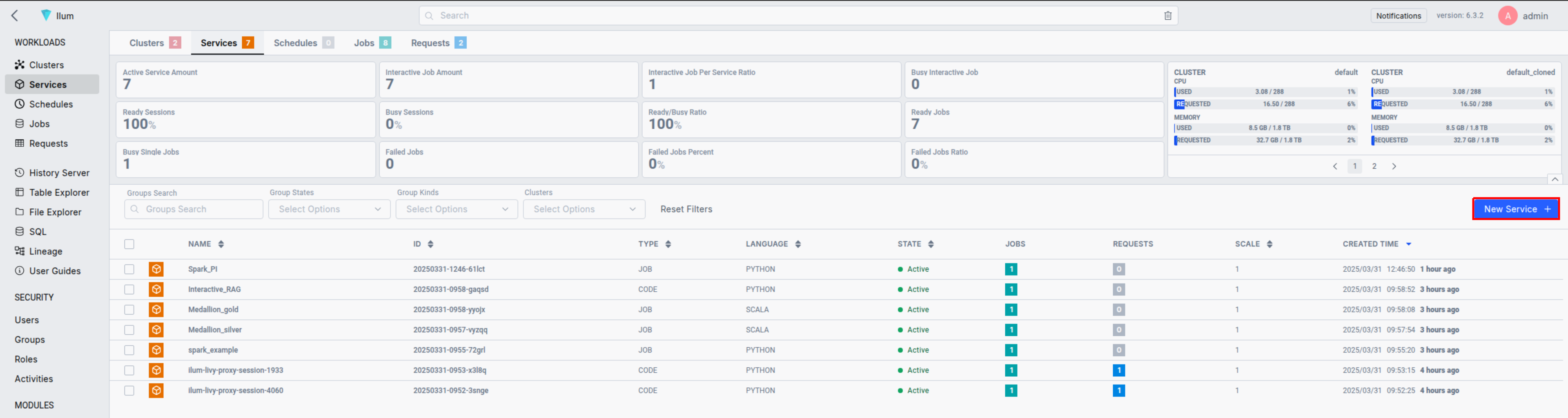

Submitting a Spark Application on UI

New to Ilum? Learn the fastest path from install → first job. Take the official Ilum Course.

Now that your Kubernetes cluster is configured to handle Spark jobs via Ilum, let's submit a Spark application. For this example, we'll use the "SparkPi" example from the Spark documentation. You can download the required JAR file from one of these links:

- Spark 4 / Scala 2.13 (default): spark-examples_2.13-4.1.1.jar

- Spark 3 / Scala 2.12: spark-examples_2.12-3.5.7.jar

Ilum creates a Spark driver pod using the Spark 4.x Docker image. You can scale the number of Spark executor pods across multiple nodes as needed.

And that's it! You've successfully set up Ilum and run your first Spark job. Feel free to explore the Ilum UI and API for submitting and managing Spark applications. For traditional approaches, you can also use the familiar spark-submit command.
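For reference, a generic Spark-on-Kubernetes spark-submit invocation of SparkPi looks roughly like the command assembled below. This is plain Apache Spark usage, not an Ilum-specific API: the API server address, container image, and JAR location are placeholders you must replace, sparkpi_submit_args is a hypothetical helper, and depending on your cluster's RBAC you may also need spark.kubernetes.authenticate.driver.serviceAccountName. The trailing argument is the number of slices SparkPi splits the work into.

```python
"""Assemble a plain spark-submit command for SparkPi on Kubernetes (generic Spark, not Ilum-specific)."""
import shlex


def sparkpi_submit_args(master: str, image: str, jar_uri: str,
                        executors: int = 2, slices: int = 10,
                        namespace: str = "default") -> list[str]:
    """Return argv for spark-submit in cluster mode; all inputs are caller-supplied."""
    return [
        "spark-submit",
        "--master", f"k8s://{master}",
        "--deploy-mode", "cluster",
        "--name", "spark-pi",
        "--class", "org.apache.spark.examples.SparkPi",
        "--conf", f"spark.executor.instances={executors}",
        "--conf", f"spark.kubernetes.container.image={image}",
        "--conf", f"spark.kubernetes.namespace={namespace}",
        jar_uri,
        str(slices),  # SparkPi's optional argument: number of slices (partitions)
    ]


if __name__ == "__main__":
    args = sparkpi_submit_args(
        master="https://<api-server-host>:6443",  # placeholder: your Kubernetes API server
        image="<your-spark-4-image>",            # placeholder image reference
        jar_uri="local:///path/in/image/spark-examples_2.13-4.1.1.jar",  # placeholder path
    )
    print(shlex.join(args))
```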

Interactive Spark Job with Scala/Java

Interactive jobs in Ilum are long-running sessions that execute incoming requests immediately. Because the Spark context stays initialized between requests, there is no need to wait for it to start every time. If multiple users point to the same job ID, they interact with the same Spark context.
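The session-sharing behavior can be pictured with a small conceptual model: an expensive context is created once per job ID and then reused by every caller that references that ID. This illustrates the idea only and is not Ilum's implementation; SessionRegistry and its methods are hypothetical.

```python
"""Conceptual model of interactive sessions: one context per job ID, shared by all callers."""
import threading
from typing import Callable, Dict, Generic, TypeVar

C = TypeVar("C")


class SessionRegistry(Generic[C]):
    """Hypothetical registry that lazily creates and caches one context per job ID."""

    def __init__(self, create_context: Callable[[str], C]):
        self._create = create_context
        self._contexts: Dict[str, C] = {}
        self._lock = threading.Lock()  # callers may arrive concurrently

    def get(self, job_id: str) -> C:
        """Return the context for job_id, creating it only on first use."""
        with self._lock:
            if job_id not in self._contexts:
                self._contexts[job_id] = self._create(job_id)  # slow startup happens once
            return self._contexts[job_id]
```

Only the first request for a job ID pays the startup cost; later requests for the same ID reuse the cached context.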

To enable interactive capabilities in your existing Spark jobs, implement a simple interface in the part of your code that needs to be interactive. Here's how:

First, add the Ilum job API dependency to your project:

Gradle

```groovy
implementation 'cloud.ilum:ilum-job-api:6.3.0'
```

Maven

```xml
<dependency>
    <groupId>cloud.ilum</groupId>
    <artifactId>ilum-job-api</artifactId>
    <version>6.3.0</version>
</dependency>
```

sbt

```scala
libraryDependencies += "cloud.ilum" % "ilum-job-api" % "6.3.0"
```

Then, implement the Job trait/interface in your Spark job. Here's an example:

Scala

```scala
package interactive.job.example

import cloud.ilum.job.Job
import org.apache.spark.sql.SparkSession

class InteractiveJobExample extends Job {

  override def run(sparkSession: SparkSession, config: Map[String, Any]): Option[String] = {
    val userParam = config.getOrElse("userParam", "None").toString
    Some(s"Hello ${userParam}")
  }
}
```

Java

```java
package interactive.job.example;

import cloud.ilum.job.Job;
import org.apache.spark.sql.SparkSession;
import scala.Option;
import scala.Some;
import scala.collection.immutable.Map;

public class InteractiveJobExample implements Job {

    @Override
    public Option<String> run(SparkSession sparkSession, Map<String, Object> config) {
        // Scala's getOrElse takes its default as a Function0, hence the lambda.
        // Keep the value as Object (like .toString in Scala) in case it is not a String.
        Object userParam = config.getOrElse("userParam", () -> "None");
        return Some.apply("Hello " + userParam);
    }
}
```

In this example, the run method is overridden to accept a SparkSession and a configuration map. It retrieves a user parameter from the configuration map and returns a greeting message.

You can find a similar example on GitHub.

By following this pattern, you can transform your Spark jobs into interactive jobs that can execute calculations immediately, improving user interactivity and reducing waiting times.

Interactive Spark Job with Python

Below is an example of how to configure an interactive Spark job in Python using the ilum library:

Spark Image Setup

a) Use a Docker image from DockerHub

Each Spark image we provide on DockerHub already has the necessary components built in.

b) Install the ilum package

If, for any reason, your Docker image does not include the ilum package, or if you build your own custom image, you can install it (either within the container or locally) by running:

```shell
pip install ilum-job-api
```

Job Structure in ilum

The Spark job logic is encapsulated in a class that extends IlumJob, in its run method:

```python
from ilum.api import IlumJob


class PythonSparkExample(IlumJob):
    def run(self, spark, config):
        # Job logic here
        pass
```

A simple interactive SparkPi example:

```python
from random import random
from operator import add

from ilum.api import IlumJob


class SparkPiInteractiveExample(IlumJob):
    def run(self, spark, config):
        partitions = int(config.get('partitions', '5'))
        n = 100000 * partitions

        def is_inside_unit_circle(_: int) -> float:
            x = random() * 2 - 1
            y = random() * 2 - 1
            return 1.0 if x ** 2 + y ** 2 <= 1 else 0.0

        count = (
            spark.sparkContext.parallelize(range(1, n + 1), partitions)
            .map(is_inside_unit_circle)
            .reduce(add)
        )

        pi_approx = 4.0 * count / n
        return f"Pi is roughly {pi_approx}"
```

You can find a similar example on GitHub.
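Because the Spark version only parallelizes the sampling, the estimate itself can be sanity-checked in plain Python. This sketch mirrors the math of SparkPiInteractiveExample: points are drawn uniformly from the square [-1, 1] x [-1, 1], and the fraction landing inside the unit circle approximates the area ratio π/4, hence the factor of 4. estimate_pi is a hypothetical helper, and its seed parameter exists only to make runs repeatable.

```python
"""Plain-Python version of the SparkPi estimate, for checking the logic without Spark."""
import random
from typing import Optional


def estimate_pi(partitions: int = 5, seed: Optional[int] = None) -> float:
    """Sample 100_000 * partitions points, as the Spark job does, and return 4 * inside / n."""
    rng = random.Random(seed)
    n = 100_000 * partitions
    inside = 0
    for _ in range(n):
        x = rng.random() * 2 - 1
        y = rng.random() * 2 - 1
        if x * x + y * y <= 1:
            inside += 1
    # Circle area (pi * 1^2) divided by square area (2 * 2) is pi / 4.
    return 4.0 * inside / n


if __name__ == "__main__":
    print(f"Pi is roughly {estimate_pi(partitions=5)}")
```

With 100,000 samples per partition, the estimate typically lands within about 0.01 of π; more partitions mean more samples and a tighter estimate.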

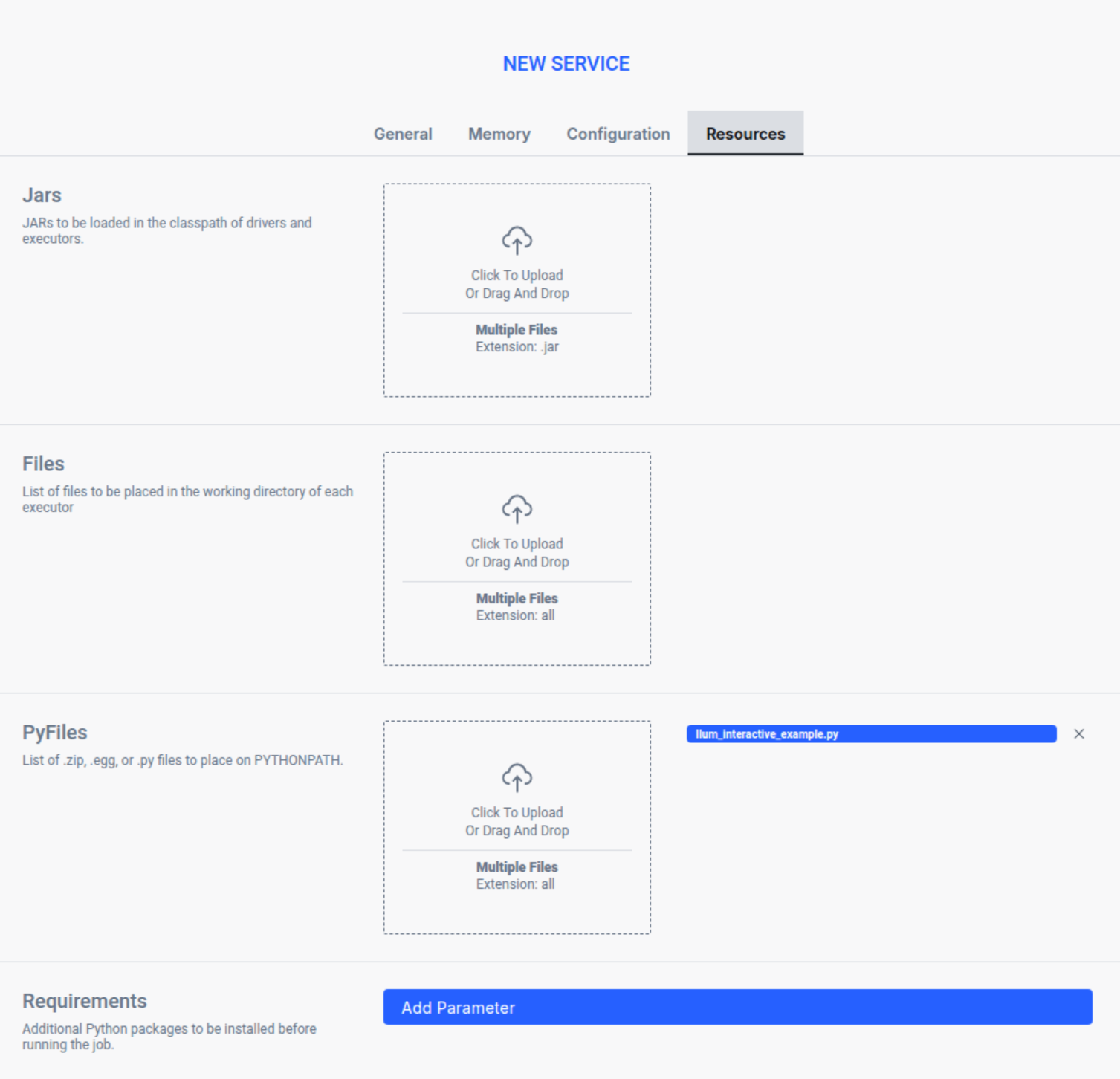

Submitting an Interactive Spark Job on UI

After creating a file that contains your Spark code, you will need to submit it to Ilum. Here's how you can do it:

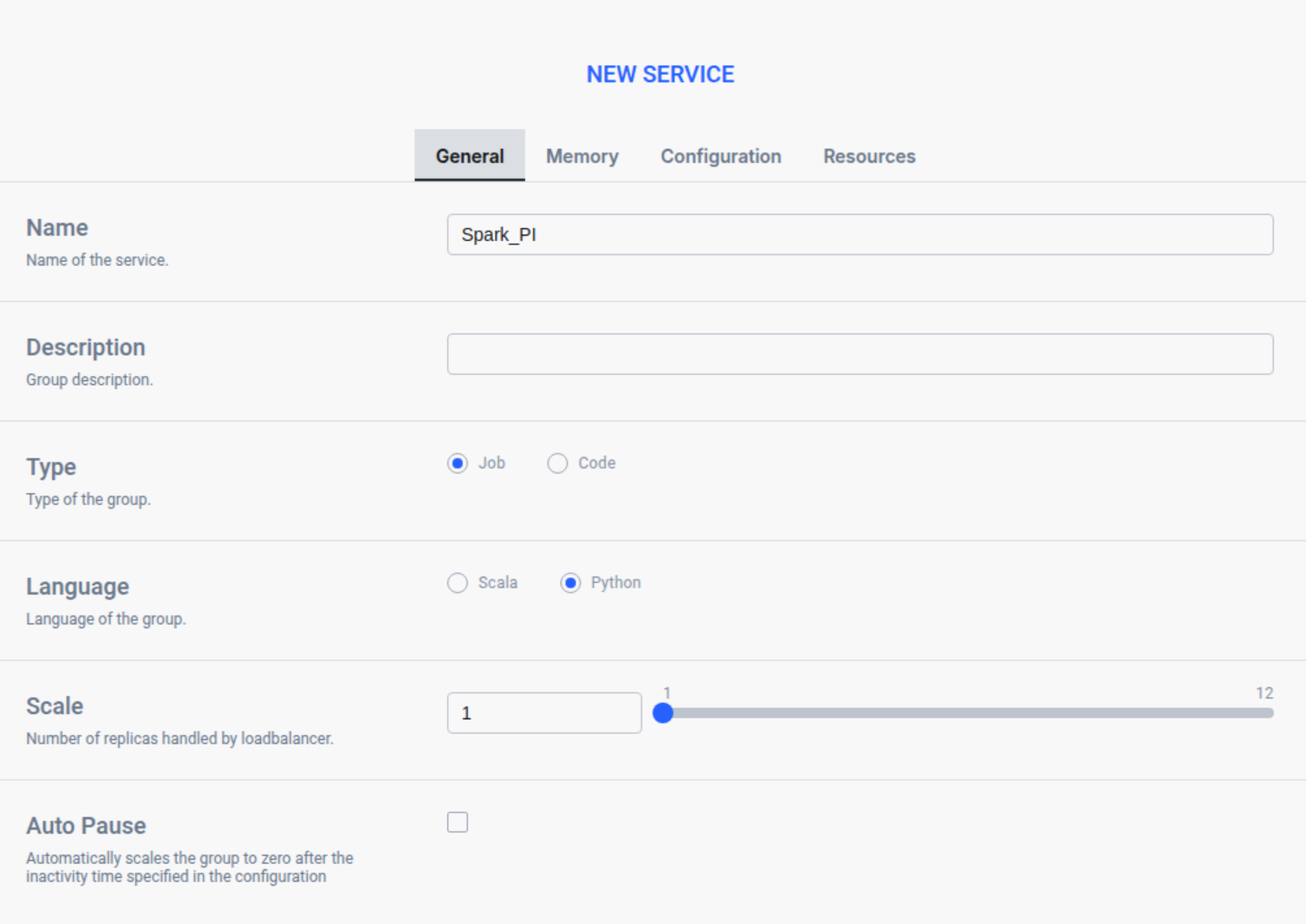

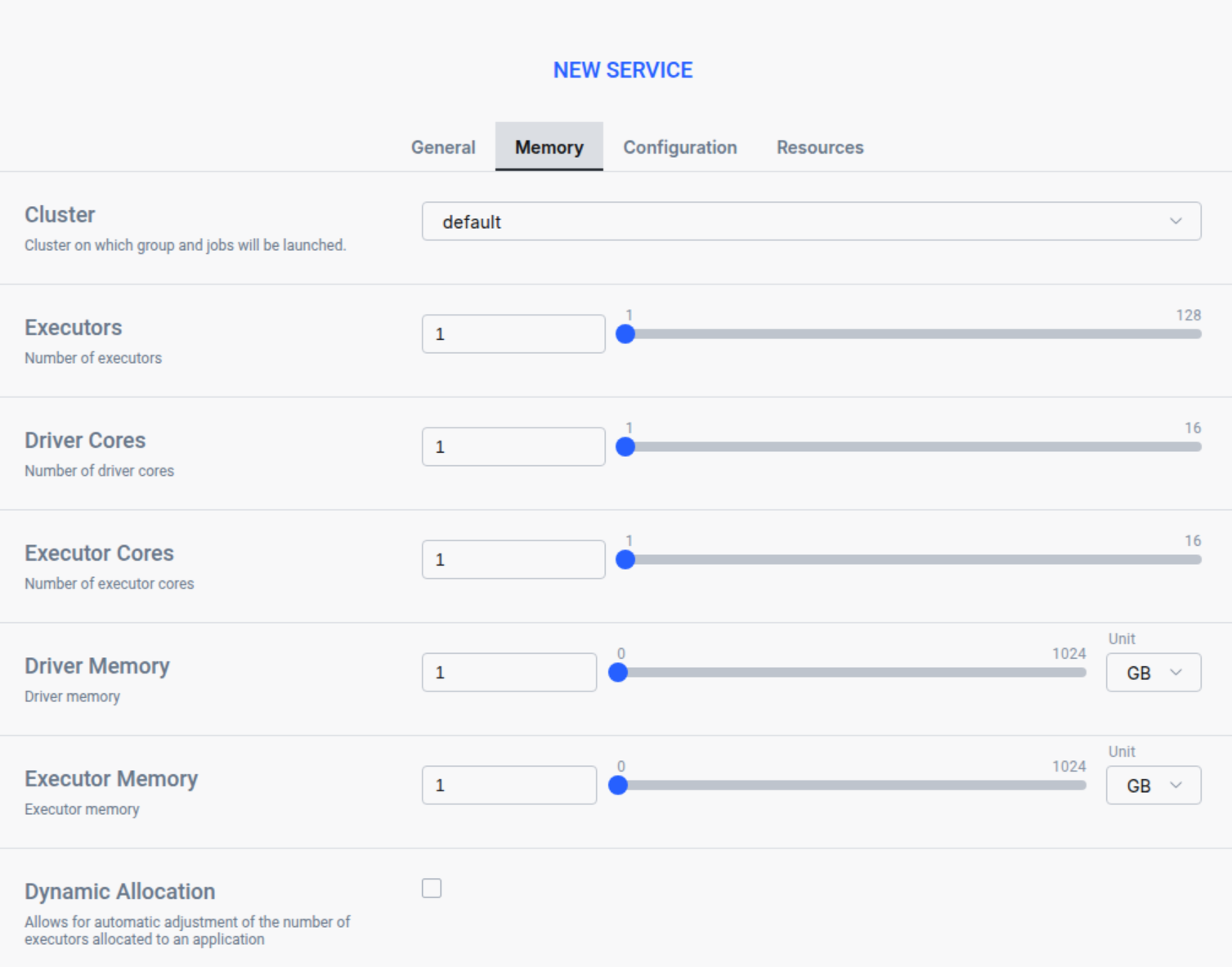

Open Ilum UI in your browser and create a new service:

- In the General tab, enter a name for the service.

- In the Memory tab, choose a cluster and configure the memory settings.

- In the Resource tab, upload your Spark file.

Press Submit to apply your changes, and Ilum will automatically create a Spark driver pod. You can adjust the number of Spark executor pods by scaling them as needed.

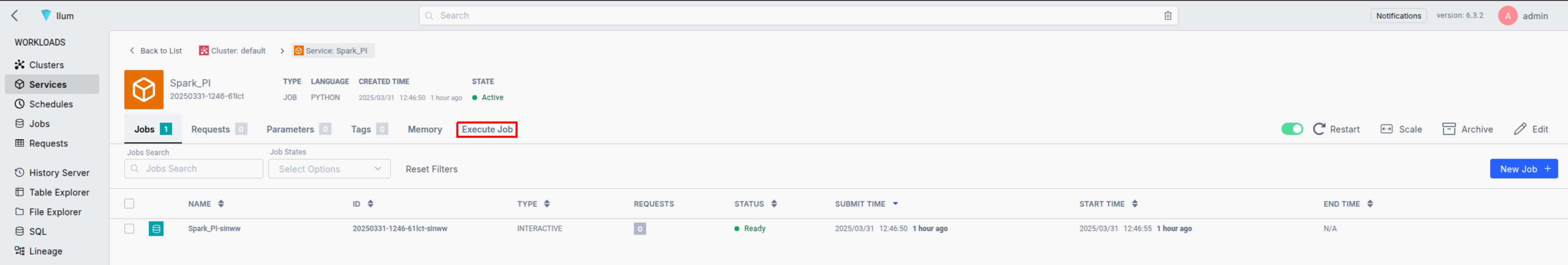

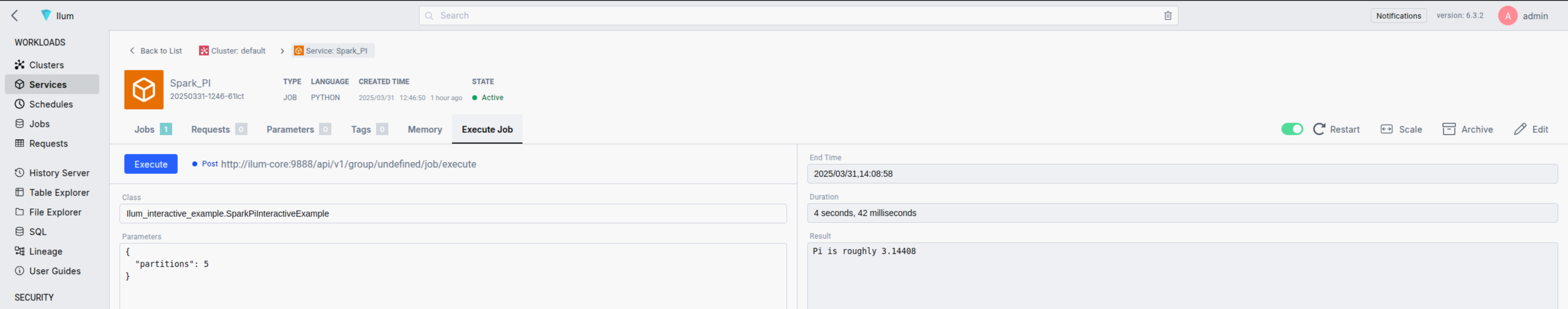

Next, go to the Workloads section to locate your job. By clicking on its name, you can access its detailed view. Once the Spark container is ready, you can run the job by specifying the filename.classname and defining any optional parameters in JSON format.

Enter filename.classname in the Class field:

Ilum_interactive_spark_pi.SparkPiInteractiveExample

Then define the partitions parameter in JSON format:

```json
{
  "partitions": 5
}
```

The first request may take a few seconds while the Spark context initializes; subsequent requests return almost immediately.
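The two UI inputs can also be checked locally before you submit. This sketch assumes, based on the example above, that the Class field is the Python file name without .py followed by the class name, and that the JSON parameters arrive in run as the config dictionary; class_field and job_config are hypothetical helpers.

```python
"""Derive the Class field value and the job's config from the UI inputs (illustrative sketch)."""
import json
from pathlib import Path


def class_field(script_path: str, class_name: str) -> str:
    """'filename.classname': the file name without its .py extension, then the class."""
    return f"{Path(script_path).stem}.{class_name}"


def job_config(params_json: str) -> dict:
    """Parse the JSON parameters; raises ValueError for invalid JSON or a non-object value."""
    config = json.loads(params_json)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(config, dict):
        raise ValueError("parameters must be a JSON object")
    return config


if __name__ == "__main__":
    print(class_field("Ilum_interactive_spark_pi.py", "SparkPiInteractiveExample"))
    config = job_config('{"partitions": 5}')
    partitions = int(config.get("partitions", "5"))  # same parsing as the example job
    print(f"{partitions} partitions -> {100_000 * partitions} samples")
```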

By following these steps, you can submit and run interactive Spark jobs using Ilum. This functionality provides real-time data processing, enhances user interactivity, and reduces the time spent waiting for results.