Create and Connect a Remote GKE Cluster to Data Lakehouse

Introduction

Ilum empowers you to manage a powerful multi-cluster setup from a single, central control plane. While Ilum automates the deployment and configuration of its core components, setting up the underlying infrastructure requires precise coordination.

This guide provides a comprehensive walkthrough for setting up a multi-cluster architecture on Google Kubernetes Engine (GKE). You will learn how to:

- Provision a central control plane (Master Cluster).

- Set up a dedicated execution environment (Remote Cluster).

- Establish secure communication between them using client certificates and ingress rules.

By the end, you will have launched your first Ilum Job on a remote cluster. The examples use Google Kubernetes Engine (GKE), but the same flow applies to any Kubernetes distribution.

Prerequisites

Before starting the tutorial, make sure you have:

- Access to a Google Cloud project with billing enabled.

- kubectl installed and configured on your machine (version compatible with your GKE cluster).

- Helm installed (v3+).

- The Google Cloud CLI (gcloud) installed and initialized (you can run gcloud auth login and gcloud config list without errors).

- The gke-gcloud-auth-plugin installed and available in your PATH so that kubectl can authenticate to GKE clusters.

- Permissions in the target Google Cloud project to:

- create and manage GKE clusters (e.g. Kubernetes Engine Cluster Admin or equivalent),

- create and use Cloud Storage buckets if you plan to use GCS for data.
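A quick preflight check can confirm that the tools listed above are on your PATH before you begin. The following is only a minimal sketch; missing_tools is a hypothetical helper name chosen for illustration, not part of any of these CLIs.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print each named tool that is NOT found on PATH.
missing_tools() {
  local tool
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Usage (prints nothing when everything is installed):
#   missing_tools gcloud kubectl helm gke-gcloud-auth-plugin
```

An empty result only shows that the binaries exist; it does not check versions or whether gcloud is authenticated.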

What you'll accomplish in this guide:

| Step | Task | Purpose |

|---|---|---|

| 1 | Create two GKE clusters | Set up master (control plane) and remote (job execution) |

| 2 | Install Ilum on master | Deploy Ilum's core components |

| 3 | Set up authentication | Create secure credentials for remote cluster access |

| 4 | Register remote cluster | Add cluster to Ilum's management interface |

| 5 | Configure networking | Enable communication between clusters |

| 6 | Run your first job | Verify the multi-cluster setup works |

Step 1. Provision Master and Remote GKE Clusters

The foundation of a multi-cluster setup consists of two distinct entities:

- Master Cluster: Hosts the Ilum control plane (UI, API, Scheduler).

- Remote Cluster: A dedicated environment where Ilum executes the Spark jobs dispatched from the master.

Create a project

- Open Google Cloud Console.

- Click the Project selector in the top-left corner.

- Click New Project.

- Enter a project name and (if applicable) select an Organization/Folder.

- Click Create.

- Select the newly created project in the project selector.

Enable Google Kubernetes Engine API

- In the Console search bar, type Kubernetes Engine.

- Open Kubernetes Engine.

- Click Enable to enable the Google Kubernetes Engine API for the selected project.

Switch to the chosen project in gcloud

- In the Console, open the project selector and copy the Project ID.

- In your terminal, set this project as active:

gcloud config set project PROJECT_ID

- (Optional) If you plan to create clusters in a specific region often, set a default region:

gcloud config set compute/region europe-central2

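With a default region set, you do not have to pass --region or --zone to each command, which avoids gcloud errors about a missing location. Note that when the location is a region rather than a zone, GKE creates a regional cluster and --num-nodes applies per zone.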

Create a cluster

Create the master cluster first:

gcloud container clusters create master-cluster \
  --machine-type=n1-standard-8 \
  --num-nodes=1

Create the remote cluster with a different name:

gcloud container clusters create remote-cluster \
  --machine-type=n1-standard-4 \
  --num-nodes=1

Resource Requirements & Architecture:

Why two clusters? The master cluster runs Ilum's control plane (UI, API, scheduler). The remote cluster executes your Spark jobs. This separation allows independent scaling and multi-cluster management from one interface.

Sizing:

- Master cluster: This example uses n1-standard-8 (8 vCPU, 30 GB RAM) for testing only. Minimum recommended: 12 vCPUs and 48 GB RAM (e.g., n1-standard-16, since the N1 standard family has no 12-vCPU size). Production environments with many users need significantly more.

- Remote cluster: This example uses n1-standard-4 (4 vCPU, 15 GB RAM) for testing only. Production workloads require larger machines (e.g., n1-standard-16 or larger) and multiple nodes depending on your Spark job requirements.

Step 2. Install Ilum Control Plane on Master Cluster

Once your clusters are running, the next step is to deploy the Ilum platform on the master cluster.

Switch to the master cluster config in kubectl

# get kubectl contexts and find the one with your cluster

kubectl config get-contexts

# switch to it using this command

kubectl config use-context MASTER_CLUSTER_CONTEXT

Create namespace and switch to it in kubectl

kubectl create namespace ilum

kubectl config set-context --current --namespace=ilum

Install Ilum using Helm charts:

helm repo add ilum https://charts.ilum.cloud

helm install ilum -n ilum ilum/ilum

What just happened? You've installed Ilum's control plane on the master cluster. You can now access Ilum's interface to manage jobs across multiple clusters.
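Before moving on, it can help to confirm that the Ilum pods have actually started. The sketch below filters the output of kubectl get pods; pods_not_running is a hypothetical helper name, and it only inspects the STATUS column, so a pod reported as Running may still be failing its readiness checks.

```shell
# Read `kubectl get pods --no-headers` output on stdin and print the name of
# every pod whose STATUS column is neither Running nor Completed.
pods_not_running() {
  awk '$3 != "Running" && $3 != "Completed" { print $1 }'
}

# Usage on the master cluster (prints nothing once all pods have started):
#   kubectl get pods -n ilum --no-headers | pods_not_running
```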

Step 3. Configure Authentication for Remote Cluster Access

Goal: Generate secure credentials (client certificates) that authorize the Ilum control plane to deploy and manage resources on the remote cluster.

Important: All steps in this section must be performed while connected to the remote cluster (not the master cluster).

Why this is needed:

When you created the remote cluster with gcloud, it was automatically added to your kubeconfig with your Google Cloud account credentials. However, Ilum doesn't have access to your Google account.

Ilum needs its own authentication method - client certificates - to connect to and manage the remote cluster. This section creates those certificates.

| Step | Action | Purpose |

|---|---|---|

| 1 | Create Client Key | Generate private key for authentication |

| 2 | Create CSR | Request identity verification from cluster |

| 3 | Register & Approve CSR | Cluster admin validates and signs request |

| 4 | Get Signed Certificate | Retrieve the "ID card" for Ilum |

| 5 | Grant Admin Permissions | Authorize Ilum to manage cluster resources |

| ✓ | Result | Ilum can securely manage the cluster |

This is similar to how kubectl authenticates to clusters, but we're doing it programmatically for Ilum.

Before you start: Make sure you're connected to the remote cluster context:

kubectl config use-context gke_<your-project>_<region>_remote-cluster

You can verify with: kubectl config current-context
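The context names used throughout this guide follow the gke_<project>_<location>_<cluster> pattern that gcloud writes into your kubeconfig. As an illustration, a small helper (hypothetical name gke_context_name) can assemble the name from its parts; the location is a region for regional clusters and a zone for zonal ones.

```shell
# Build the kubeconfig context name that gcloud generates for a GKE cluster:
#   gke_<project>_<location>_<cluster>
gke_context_name() {
  printf 'gke_%s_%s_%s\n' "$1" "$2" "$3"
}

# Usage (project and location values here are placeholders):
#   kubectl config use-context "$(gke_context_name my-project europe-central2 remote-cluster)"
```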

Step 3.1: Create a Client Key

What we're doing: Generating a private key that will be used for authentication.

First, create a dedicated directory for the certificates and work there:

mkdir -p ~/remote-cluster

cd ~/remote-cluster

Now generate the private key:

openssl genpkey -algorithm RSA -out client.key

Security: This private key is like a password. Keep client.key secure and never commit it to version control.

Step 3.2: Create Certificate Request

What we're doing: Creating a Certificate Signing Request (CSR) that asks the remote cluster to verify and sign our identity.

Replace myuser with the username of your choice:

openssl req -new -key client.key -out csr.csr -subj "/CN=myuser"

What is a CSR? A Certificate Signing Request asks the Kubernetes cluster to create a signed certificate for your user. The cluster will verify and sign it, creating a trusted identity.

Step 3.3: Encode the CSR

What we're doing: Converting the CSR to base64 format, which is required by Kubernetes.

cat csr.csr | base64 | tr -d '\n'

Copy the output - you'll need it in the next step.

Step 3.4: Register CSR in Kubernetes

What we're doing: Submitting the CSR to the remote cluster for approval.

Create csr.yaml (replace myuser and paste your encoded CSR):

apiVersion: certificates.k8s.io/v1
kind: CertificateSigningRequest
metadata:
  name: myuser # Replace with your username
spec:
  request: <paste your base64 encoded CSR here>
  signerName: kubernetes.io/kube-apiserver-client
  usages:
    - client auth

Apply the CSR to the remote cluster:

kubectl apply -f csr.yaml
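If you would rather not paste the encoded CSR by hand, Steps 3.3 and 3.4 can be combined. The sketch below assumes the same file name (csr.csr) and user name (myuser) as above; render_csr_manifest is a hypothetical helper, not an Ilum or kubectl command.

```shell
# Hypothetical helper: print a CertificateSigningRequest manifest for the given
# user name, embedding the base64-encoded contents of the CSR file.
render_csr_manifest() {
  local user="$1" csr_file="$2" encoded
  encoded="$(base64 < "$csr_file" | tr -d '\n')"
  cat <<EOF
apiVersion: certificates.k8s.io/v1
kind: CertificateSigningRequest
metadata:
  name: ${user}
spec:
  request: ${encoded}
  signerName: kubernetes.io/kube-apiserver-client
  usages:
    - client auth
EOF
}

# Usage:
#   render_csr_manifest myuser csr.csr > csr.yaml
#   kubectl apply -f csr.yaml
```

Generating the file this way avoids copy-and-paste mistakes such as a truncated or line-wrapped request value.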

Step 3.5: Approve and Retrieve the Certificate

What we're doing: As cluster admin, we approve our own CSR and retrieve the signed certificate.

Approve the CSR:

kubectl certificate approve myuser

Retrieve the signed certificate:

kubectl get csr myuser -o jsonpath='{.status.certificate}' | base64 --decode > client.crt

What happened? You've registered your certificate request with Kubernetes, approved it (as cluster admin), and retrieved the signed certificate. This certificate + private key pair is now your authentication credential for the remote cluster.
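A common cause of later authentication failures is a certificate that does not belong to the key on disk, for example after regenerating client.key. One way to check, sketched here with a hypothetical helper named cert_matches_key, is to compare the public key embedded in the certificate with the one derived from the private key.

```shell
# Succeeds (exit 0) when the certificate's public key matches the private key.
cert_matches_key() {
  local cert="$1" key="$2"
  [ "$(openssl x509 -in "$cert" -noout -pubkey)" = "$(openssl pkey -in "$key" -pubout)" ]
}

# Usage:
#   cert_matches_key client.crt client.key && echo "certificate and key match"
#   openssl x509 -in client.crt -noout -subject -enddate   # shows the CN and expiry date
```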

Step 3.6: Get CA Certificate

What we're doing: Extracting the cluster's CA certificate. This will be needed for the optional test in the next section, and later in Step 4 when adding the cluster to Ilum.

Get and save the CA certificate:

kubectl config view --minify --raw -o jsonpath='{.clusters[0].cluster.certificate-authority-data}' | base64 --decode > ca.crt

Verify the certificate was saved correctly:

openssl x509 -in ca.crt -text -noout | head -n 5

Why do we need this?

- CA Certificate: Verifies the cluster's identity (prevents man-in-the-middle attacks)

- Flags: --minify limits the output to the current context; --raw prints the actual certificate data instead of a redacted placeholder.

Step 3.7: Grant Permissions with Role Binding

What we're doing: Giving the certificate user (myuser) admin permissions on the remote cluster.

Create rolebinding.yaml:

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: myuser-admin-binding
subjects:
  - kind: User
    name: myuser # Match your username
    apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: ClusterRole
  name: cluster-admin
  apiGroup: rbac.authorization.k8s.io

Apply the role binding to the remote cluster:

kubectl apply -f rolebinding.yaml

Security: We're granting cluster-admin for simplicity. In production, create a custom role with only the permissions Ilum needs:

- Create/delete pods, services, configmaps

- Read secrets

- Manage persistent volumes
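Whichever role you bind, you can ask the API server what the certificate user is allowed to do by using kubectl auth can-i with impersonation. The sketch below is illustrative: check_permissions is a hypothetical helper, the verb and resource pairs are taken from the list above, and your own account needs impersonation rights (cluster admins have them).

```shell
# KUBECTL can be overridden (e.g. KUBECTL=echo for a dry run); defaults to kubectl.
KUBECTL="${KUBECTL:-kubectl}"

# Ask the API server whether USER may perform each "verb resource" pair
# in the given namespace, using impersonation (--as).
check_permissions() {
  local user="$1" namespace="$2" verb resource
  while read -r verb resource; do
    printf '%s %s: ' "$verb" "$resource"
    $KUBECTL auth can-i "$verb" "$resource" --as "$user" -n "$namespace" < /dev/null
  done <<EOF
create pods
delete pods
create services
create configmaps
get secrets
EOF
}

# Usage on the remote cluster:
#   check_permissions myuser ilum
```

Each line ends with the yes or no answer returned by kubectl.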

Step 3.8: Configure Service Account Permissions

What we're doing: Granting permissions to the ServiceAccount that Spark pods will use.

What is a Service Account? It's the Kubernetes equivalent of a user for pods. Spark driver pods use it to create executor pods and manage resources in the cluster.

By default, pods use the default ServiceAccount in their namespace, but it doesn't have sufficient privileges to create other pods.

Create sa_role_binding.yaml:

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: ilum-default-admin-binding
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: ServiceAccount
    name: default
    namespace: ilum # Namespace where Spark jobs will run

Apply the role binding to the remote cluster:

kubectl apply -f sa_role_binding.yaml

Production Security: We're granting cluster-admin to the default ServiceAccount for simplicity. In production:

- Create a dedicated ServiceAccount (e.g., spark-driver) with minimal permissions

- Specify it in your cluster configuration: spark.kubernetes.authenticate.driver.serviceAccountName=spark-driver

- Grant only the permissions needed: create/delete pods, read configmaps/secrets, etc.

(Optional) Test the Client Certificates

Why this step? This optional step verifies that the certificates work correctly before we give them to Ilum in Step 4.

We'll create a temporary kubectl context that uses the certificates (instead of your Google Cloud credentials) to confirm they have the correct permissions.

1. Verify certificates are in the directory:

All certificate files should already be in ~/remote-cluster/ from the previous steps:

ls ~/remote-cluster/

# You should see at least: ca.crt client.crt client.key csr.csr csr.yaml
# (plus rolebinding.yaml and sa_role_binding.yaml if you created them in this directory)

2. Get your remote cluster name:

kubectl config view -o jsonpath='{.clusters[*].name}' | tr ' ' '\n' | grep remote

You should see something like: gke_my-project_europe-central2_remote-cluster

Copy this cluster name.

3. Add the certificate-based user to kubectl:

kubectl config set-credentials myuser-cert \
  --client-certificate=$HOME/remote-cluster/client.crt \
  --client-key=$HOME/remote-cluster/client.key

This creates a new user in your kubeconfig that uses certificates instead of your Google account.

4. Create a test context:

kubectl config set-context test-remote-certs \
  --cluster=<paste-your-remote-cluster-name-here> \
  --namespace=default \
  --user=myuser-cert

Replace <paste-your-remote-cluster-name-here> with the cluster name from step 2.

5. Test the certificate-based authentication:

kubectl config use-context test-remote-certs

kubectl get pods

Expected output:

No resources found in default namespace.

If you see this (or a list of pods), the certificates work! ✅

6. Switch back to your normal context:

# Find your original context

kubectl config get-contexts

# Switch back (replace with your actual context name)

kubectl config use-context gke_<your-project>_<region>_remote-cluster

What we just verified: The client certificates can successfully authenticate to the remote cluster with admin permissions. In Step 4, we'll provide these same certificates to Ilum so it can manage the cluster.
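As an alternative that leaves your kubeconfig untouched, the same check can be run as a one-off command by passing the certificate files directly to kubectl. This is a sketch under assumptions: kubectl_with_certs is a hypothetical wrapper, and pointing --kubeconfig at /dev/null is used here so that your Google credentials cannot be picked up by accident.

```shell
# KUBECTL can be overridden (e.g. KUBECTL=echo to print the command); defaults to kubectl.
KUBECTL="${KUBECTL:-kubectl}"

# Run a single kubectl command against SERVER using only the certificate files
# in DIR, ignoring the contexts and users stored in your kubeconfig.
kubectl_with_certs() {
  local server="$1" dir="$2"
  shift 2
  $KUBECTL --kubeconfig=/dev/null --server="$server" \
    --certificate-authority="$dir/ca.crt" \
    --client-certificate="$dir/client.crt" \
    --client-key="$dir/client.key" \
    "$@"
}

# Usage (the server URL is the API endpoint of your remote cluster):
#   kubectl_with_certs https://<remote-api-server> ~/remote-cluster get pods -n default
```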

Step 4. Register the Remote Cluster in Ilum UI

With your certificates generated and permissions granted, you are now ready to connect the remote cluster to the Ilum control plane.

What you'll provide: All the credentials and connection details you created in Step 3:

| Item | File/Value | Purpose |

|---|---|---|

| CA Certificate | ca.crt | Verifies cluster identity |

| Client Certificate | client.crt | Your authentication credential |

| Client Key | client.key | Private key for authentication |

| Server URL | From kubectl | Cluster API endpoint |

| Username | myuser | User identity |

Access Ilum UI

Before you can add the remote cluster to Ilum, you need to access the Ilum web interface.

1. Switch to the master cluster context:

kubectl config use-context gke_<your-project>_<region>_master-cluster

You can verify with: kubectl config current-context

2. Set up port forwarding to Ilum UI:

kubectl port-forward -n ilum svc/ilum-ui 9777:9777

Keep this terminal window open - it needs to run continuously.

3. Open Ilum in your browser:

Open http://localhost:9777 in your web browser.

4. Log in:

- Username: admin

- Password: admin

Now you're ready to add the remote cluster to Ilum!

Demo: Adding a Kubernetes Cluster

Go to cluster creation page

- Go to the Clusters section under Workload

- Click New Cluster button

Specify general settings:

- Choose a name and a description that you want.

- Set cluster type to Kubernetes

- Choose a Spark version

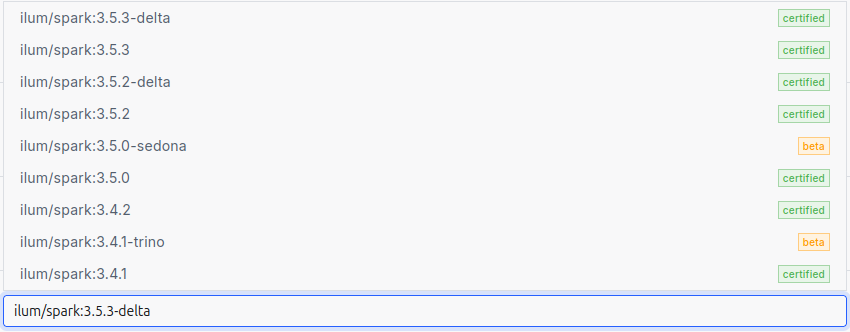

The Spark version is specified by selecting an image for the Spark jobs.

Ilum requires the use of its own images, which include all the necessary dependencies preinstalled.

Here is a list of all available images:

Specify spark configurations

The Spark configurations that you specify at the cluster level will be applied to every individual Ilum job deployed on that cluster.

This can be useful if you want to avoid repeating configurations. For example, if you are using Iceberg as your Spark catalog, you can configure it once at the cluster level, and then all Ilum jobs deployed on this cluster will automatically use these configurations.

Important: Set the namespace for Spark jobs

In the Parameters section, add:

spark.kubernetes.namespace: ilum

This ensures all Spark jobs run in the ilum namespace, where you will create the external services (ilum-core, ilum-grpc, ilum-minio) in Step 5. Without this setting, jobs would run in the default namespace and could not connect to the master cluster's services.

Add storages

Ilum uses the default cluster storage to keep all files required to run Ilum Jobs, both those provided by the user and those generated by Ilum itself.

You can choose any type of storage: S3, GCS, WASBS, HDFS.

For detailed instructions on setting up storage (especially GCS), see the Create Storage guide.

Quick setup in the UI:

- Click on the "Add storage" button

- Specify the name and choose the storage type (S3, GCS, etc.)

- Choose the Spark Bucket: the main bucket used to store Ilum Job files

- Choose the Data Bucket: required if you use the Ilum Tables Spark format

- Specify storage endpoint and credentials

- Click on the "Submit" button

You can add any number of storages, and Ilum Jobs will be configured to use each one of them.

Go to Kubernetes section and specify kubernetes configurations

Here, we provide Ilum with all the required details to connect to our Kubernetes cluster. The process is similar to connecting to the cluster through the kubectl tool, but here it is done through the UI.

You will need to provide the following:

| Field | What to provide | File location |

|---|---|---|

| Url | Cluster API endpoint (text field) | ⚠️ Switch to remote cluster first, then: kubectl config view --minify -o jsonpath='{.clusters[0].cluster.server}' |

| CaCert | Upload CA certificate file | ~/remote-cluster/ca.crt (from Step 3.6) |

| ClientKey | Upload client private key file | ~/remote-cluster/client.key (from Step 3.1) |

| ClientCert | Upload client certificate file | ~/remote-cluster/client.crt (from Step 3.5) |

| Username | Username (text field) | myuser (or whatever you used in Step 3.2) |

Once you have specified these items, Ilum will be able to access your Kubernetes cluster.
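Before uploading, it is worth confirming that all three files exist and are not empty, since an empty client.crt usually means the CSR was not yet approved when you retrieved it. A minimal sketch, with check_credential_files as a hypothetical helper name:

```shell
# Print a line for every required credential file that is missing or empty
# in DIR; print nothing when all three files are ready to upload.
check_credential_files() {
  local dir="$1" name
  for name in ca.crt client.crt client.key; do
    [ -s "$dir/$name" ] || printf 'missing or empty: %s\n' "$dir/$name"
  done
}

# Usage:
#   check_credential_files ~/remote-cluster
```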

In addition, you can:

- Configure Kubernetes to require a password for your user. In this case, you will need to specify the password here in the UI.

- Add a passphrase when creating the client key. If you did so, you will need to specify the passphrase here in the UI.

- Specify the key algorithm used in the client.key. This is not obligatory, as this information is usually stored within the key itself. However, there may be cases where you need to explicitly define it.

Finally, you can click on the Submit button to add a cluster.


Step 5. Configure Multi-Cluster Networking

The Challenge: While Ilum can now dispatch jobs to the remote cluster, those jobs need a way to report their status, logs, and metrics back to the master cluster.

Why jobs need to communicate:

| Component | Action | Target Service | Location |

|---|---|---|---|

| Spark Jobs | Send status updates | Ilum Core (gRPC) | Master Cluster |

| Spark Jobs | Send metrics & logs | MinIO Event Log | Master Cluster |

| Scheduled Jobs | Trigger new jobs | Ilum Core | Master Cluster |

The Solution: Expose Ilum's services from the master cluster and create DNS aliases in the remote cluster.

Step 5.1: Configure Firewall

First, allow incoming traffic to the master cluster. In a Google Cloud project, network access is controlled by firewall rules. To let the remote cluster reach the master cluster's services, add the following rule to the master cluster's project. Note that this rule opens ports 80 and 443 only; the services exposed in Step 5.2 listen on 9888, 9999, and 9000, so those ports must be reachable as well.

gcloud compute firewall-rules create allow-ingress-traffic \
  --network default \
  --direction INGRESS \
  --action ALLOW \
  --rules tcp:80,tcp:443 \
  --source-ranges 0.0.0.0/0 \
  --description "Allow HTTP/HTTPS"

Security Warning: --source-ranges 0.0.0.0/0 allows traffic from anywhere. In production, restrict this to your remote cluster's IP range:

--source-ranges 10.0.0.0/8 # Example: your cluster's CIDR
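One possible way to narrow the rule is to allow only the external IPs of the remote cluster's nodes. The sketch below rests on assumptions: the remote nodes have external IPs and reach the master through them (no Cloud NAT in between), and those IPs stay stable, which is not guaranteed when nodes are recreated or autoscaled. to_source_ranges is a hypothetical helper.

```shell
# Turn a whitespace-separated list of IPv4 addresses (stdin) into the
# comma-separated /32 list that --source-ranges expects.
to_source_ranges() {
  tr -s ' \t\n' '\n' | sed '/^$/d; s|$|/32|' | paste -sd, -
}

# Usage, with kubectl pointed at the remote cluster:
#   ranges="$(kubectl get nodes \
#     -o jsonpath='{.items[*].status.addresses[?(@.type=="ExternalIP")].address}' \
#     | to_source_ranges)"
#   gcloud compute firewall-rules update allow-ingress-traffic --source-ranges="$ranges"
```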

Step 5.2: Expose Services from Master Cluster

What is LoadBalancer? It's a Kubernetes service type that provisions a public IP address, making the service accessible from outside the cluster (including from your remote cluster).

You need to expose services in the master cluster to the outside world. To achieve this, change their type to LoadBalancer. Ilum makes this process straightforward using Helm configurations.

To expose Ilum Core, gRPC, and MinIO to the outside world, run the following command in your terminal:

helm upgrade ilum -n ilum ilum/ilum \
  --set ilum-core.service.type="LoadBalancer" \
  --set ilum-core.grpc.service.type="LoadBalancer" \
  --set minio.service.type="LoadBalancer" \
  --reuse-values

After that you should wait a few minutes and then check your services by running

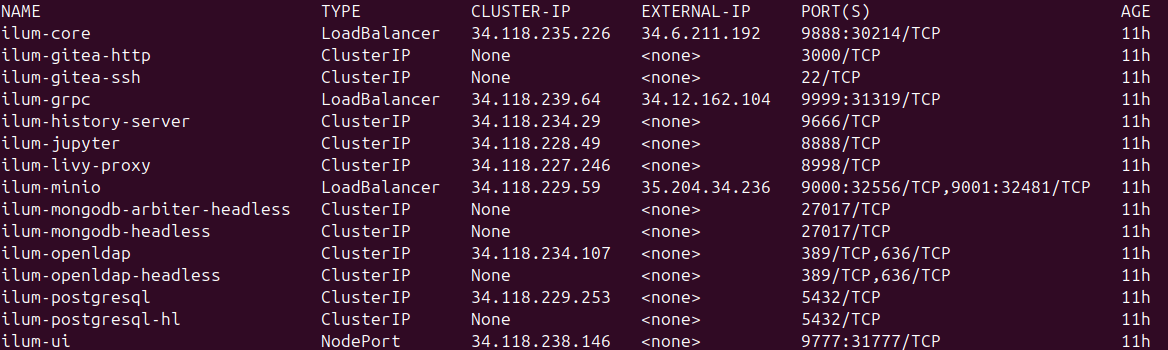

kubectl get services

to see this:

Here you can notice the ilum-core, ilum-grpc and minio services changed their type to LoadBalancer and got public IP.

Now you can open http://<public-ip>:9888/api/v1/group, where <public-ip> is the EXTERNAL-IP of the ilum-core service, to check that the API is reachable.
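Instead of reading the addresses off the table, you can query each service for its external IP directly. This is a sketch only: service_external_ip is a hypothetical helper, and the service names should be taken from your own kubectl get services output, since they can differ between chart versions.

```shell
# KUBECTL can be overridden for testing; defaults to kubectl.
KUBECTL="${KUBECTL:-kubectl}"

# Print the external IP assigned to a LoadBalancer service
# (prints nothing while the address is still pending).
service_external_ip() {
  local service="$1" namespace="$2"
  $KUBECTL get service "$service" -n "$namespace" \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Usage on the master cluster:
#   for svc in ilum-core ilum-grpc; do
#     printf '%s %s\n' "$svc" "$(service_external_ip "$svc" ilum)"
#   done
```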

Step 5.3: Create External Services in Remote Cluster

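What is ExternalName? It creates a local DNS alias in the remote cluster. When a job connects to ilum-core:9888, cluster DNS resolves that name to the address given in externalName, so jobs use familiar service names as if everything were in one cluster. Note that Kubernetes defines externalName as a DNS name; if IP-address targets do not resolve in your cluster, point externalName at a DNS name for the master's services instead.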

Switch to remote cluster context:

Before creating external services, make sure you're working on the remote cluster:

kubectl config use-context gke_<your-project>_<region>_remote-cluster

Create external_services.yaml in the remote cluster:

Use the EXTERNAL-IP addresses from the kubectl get services output in your master cluster (the public IPs shown in the screenshot above). Replace the placeholders below with these actual IP addresses.

apiVersion: v1
kind: Service
metadata:
  name: ilum-core
  namespace: ilum # Must match the namespace where jobs will run
spec:
  type: ExternalName
  externalName: 34.118.72.123 # Replace with actual EXTERNAL-IP from master cluster
  ports:
    - port: 9888
      targetPort: 9888
---
apiVersion: v1
kind: Service
metadata:
  name: ilum-grpc
  namespace: ilum # Must match the namespace where jobs will run
spec:
  type: ExternalName
  externalName: 34.118.72.124 # Replace with actual EXTERNAL-IP from master cluster
  ports:
    - port: 9999
      targetPort: 9999
---
apiVersion: v1
kind: Service
metadata:
  name: ilum-minio
  namespace: ilum # Must match the namespace where jobs will run
spec:
  type: ExternalName
  externalName: 34.118.72.125 # Replace with actual EXTERNAL-IP from master cluster
  ports:
    - port: 9000
      targetPort: 9000

Before applying:

- Replace the example IPs (34.118.72.123, etc.) with your actual EXTERNAL-IP addresses from kubectl get services in the master cluster

- Make sure the namespace: ilum matches the namespace where your Spark jobs will run in the remote cluster

- If you haven't created the ilum namespace in the remote cluster yet, create it first:

kubectl create namespace ilum

Apply the external services:

kubectl apply -f external_services.yaml
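If the master's IPs change, for example after the LoadBalancer services are recreated, the manifest has to be regenerated. The sketch below produces the same three services from a small template; render_external_service is a hypothetical helper, and the IPs in the usage comment are the example values from this guide.

```shell
# Hypothetical helper: print one ExternalName Service manifest.
# Arguments: service name, target address, port, optional namespace (default: ilum).
render_external_service() {
  local name="$1" target="$2" port="$3" namespace="${4:-ilum}"
  cat <<EOF
---
apiVersion: v1
kind: Service
metadata:
  name: ${name}
  namespace: ${namespace}
spec:
  type: ExternalName
  externalName: ${target}
  ports:
    - port: ${port}
      targetPort: ${port}
EOF
}

# Usage (substitute your own EXTERNAL-IP values):
#   {
#     render_external_service ilum-core  34.118.72.123 9888
#     render_external_service ilum-grpc  34.118.72.124 9999
#     render_external_service ilum-minio 34.118.72.125 9000
#   } > external_services.yaml
#   kubectl apply -f external_services.yaml
```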

Multi-Cluster Bridge Complete: Jobs running on the remote cluster can now reach Ilum's services on the master cluster using familiar service names (ilum-core, ilum-grpc, ilum-minio), even though they're actually connecting over the internet via public IPs.

Components in Multi-Cluster Architecture

Components compatible with multi-cluster (with additional networking):

| Component | Purpose | Requires Exposure |

|---|---|---|

| Hive Metastore | Metadata management for tables | ✅ Yes |

| Marquez | Data lineage tracking | ✅ Yes |

| History Server | Spark application history | ✅ Yes |

| Graphite | Metrics collection | ✅ Yes |

Components restricted to single-cluster:

| Component | Reason |

|---|---|

| Kube Prometheus Stack | Prometheus needs direct pod access for metrics scraping. Challenging with dynamic pods across clusters. |

| Loki and Promtail | Promtail collects logs similarly to Prometheus. Same multi-cluster limitations. |

All other Ilum services not listed above are cluster-independent and work in both single- and multi-cluster setups.

Step 6. Verify the Multi-Cluster Setup

The final step is to validate your configuration by running a real Spark job on the remote cluster.

Step 1: Access Ilum UI

- Switch to master cluster context:

kubectl config use-context gke_<your-project>_<region>_master-cluster

- Set up port-forwarding:

kubectl port-forward -n ilum svc/ilum-ui 9777:9777

Keep this terminal window open.

- Open Ilum in browser:

  - Navigate to http://localhost:9777

  - Login with default credentials: admin:admin

Step 2: Create a Test Job

- Navigate to Jobs section in Ilum UI

- Click "New Job +" button

- Configure the job:

  - Name: RemoteClusterTest

  - Job Type: Spark Job

  - Cluster: Select your remote cluster (not master)

  - Class: org.apache.spark.examples.SparkPi

  - Language: Scala

- Add Resources:

  - Go to Resources tab

  - Jars: Upload one of these:

    - Spark 4 / Scala 2.13 (default): spark-examples_2.13-4.1.1.jar

    - Spark 3 / Scala 2.12: spark-examples_2.12-3.5.7.jar

- Submit the job

Step 3: Verify Execution

If everything is configured correctly:

✅ Job starts successfully - Pods are created in the remote cluster

✅ Logs appear - You can see Spark initialization and execution logs

✅ Job completes - Final output shows: Pi is roughly 3.14...

Check remote cluster pods:

kubectl config use-context gke_<your-project>_<region>_remote-cluster

kubectl get pods -n ilum

You should see Spark driver and executor pods running or completed.
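You can also confirm the result from the command line by reading the driver log. The sketch below assumes the driver pod carries the spark-role=driver label that Spark on Kubernetes applies to driver pods; pi_result is a hypothetical helper.

```shell
# Read Spark driver log lines on stdin and print the last SparkPi result line, if any.
pi_result() {
  grep -o 'Pi is roughly [0-9.]*' | tail -n 1
}

# Usage on the remote cluster:
#   kubectl logs -n ilum -l spark-role=driver --tail=-1 | pi_result
```

If nothing is printed, the job may still be running, or the driver pod may already have been cleaned up.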

For detailed job configuration options, see the Run Simple Spark Job guide.

Troubleshooting & FAQ

Here are solutions to common issues you might encounter when connecting a remote GKE cluster.

Why is my Spark Job stuck in "Pending" state?

This usually happens if the remote cluster lacks resources or can't pull images.

- Check Resources: Ensure your node pool has enough CPU/RAM.

- Check Events: Run kubectl get events -n ilum on the remote cluster to see scheduling errors.

Why can't the remote cluster connect to Ilum Core?

If the job runs but fails to report status:

- Verify Firewall: Ensure the master cluster's firewall allows ingress on ports 9888 (Core), 9999 (gRPC), and 9000 (MinIO).

- Check DNS: Verify the ExternalName services in the remote cluster resolve to the master's public IP.
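To separate firewall problems from DNS problems, you can test raw TCP reachability of each port. This is a minimal sketch that relies on bash's /dev/tcp redirection and the coreutils timeout command; port_open is a hypothetical helper, and the result only reflects the network path from the machine where you run it.

```shell
# Succeeds (exit 0) when a TCP connection to HOST:PORT opens within 3 seconds.
port_open() {
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Usage (replace <public-ip> with the matching EXTERNAL-IP from the master cluster):
#   for port in 9888 9999 9000; do
#     port_open <public-ip> "$port" && echo "port $port reachable" || echo "port $port blocked"
#   done
```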

Can I use a private GKE cluster?

Yes, but you will need to configure VPC Peering or a VPN between the master and remote networks instead of using public LoadBalancers.

Next Steps

Congratulations! You've successfully set up a robust multi-cluster Ilum environment. You can now:

- Deploy Spark jobs to your remote cluster from the Ilum UI

- Monitor job execution across all clusters from one interface

- Scale horizontally by adding more remote clusters using the same process

- Optimize costs by using different machine types for different workloads

Production Checklist:

- Restrict firewall rules to specific IP ranges

- Create custom RBAC roles instead of cluster-admin

- Set up TLS certificates for LoadBalancer services

- Configure resource quotas and limits

- Enable monitoring and alerting

- Document your cluster configurations