Handling Spark Dependencies in Ilum

Ilum provides three methods to handle dependencies for Spark on Kubernetes, each suited for different use cases ranging from rapid prototyping to stable production environments.

Comparison of Dependency Management Methods

| Method | Best For | Persistence | Startup Speed |

|---|---|---|---|

| Custom Docker Image | Production, Large dependencies, Security | High (Immutable) | Fast (Pre-built) |

| Runtime Injection | Testing, PoCs, Small/Transient libs | Medium (Cached) | Slower (Downloads at startup) |

| Notebook pip install | Ad-hoc Experiments, Exploration | None (Session only) | Slowest (Repeated installs) |

1. Dedicated Docker Image (Production Best Practice)

This method involves creating a custom Docker image that includes all required dependencies. It ensures consistency across environments and is the best approach for production workloads.

Steps to Create a Custom Spark Image

- Start with the official Ilum Spark base image.

- Add necessary JARs for any Java-based dependencies.

- Install required Python packages.

- Build and push the image to a private or public registry.

- Configure Ilum to use this new image.

Example: Adding Apache Iceberg Support

Below is an example Dockerfile that builds on the Ilum Spark base image and adds support for Apache Iceberg:

FROM ilum/spark:3.5.7

USER root

# Add JARs for Iceberg support

ADD https://repo1.maven.org/maven2/org/apache/iceberg/iceberg-spark-runtime-3.5_2.12/1.8.0/iceberg-spark-runtime-3.5_2.12-1.8.0.jar $SPARK_HOME/jars/

# Install Python dependencies

RUN python3 -m pip install pandas "pyiceberg[hive,s3fs,pandas,snappy,gcsfs,adlfs]"

USER ${spark_uid}

Build and Push the Image

After writing the Dockerfile (for example, saved as Dockerfile in the current directory), build and push the image:

docker build -t myPrivateRepo/spark:3.5.7-iceberg .

docker push myPrivateRepo/spark:3.5.7-iceberg

Configuring Ilum to Use the Custom Image

Once the image is available in a container registry, update Ilum to use this custom Spark image:

- UI (Job & Service)

- Helm (Install Time)

- REST API

- Global Default (Cluster Config)

Per-Job/Service Setting: When submitting a Spark job or service, specify the image by setting this parameter:

spark.kubernetes.container.image: myPrivateRepo/spark:3.5.7-iceberg

During the installation process: Add this flag to your helm install command:

--set ilum-core.kubernetes.defaultCluster.config.spark\\.kubernetes\\.container\\.image="myPrivateRepo/spark:3.5.7-iceberg"

When submitting a job programmatically through the REST API, set the image parameter:

curl -X POST "http://ilum-core/api/v1/job/submit" \

-F "name=my-custom-job" \

-F "image=registry.example.com/my-team/spark-custom:v1" \

...

You can set the default image for the entire cluster via the UI using one of two methods.

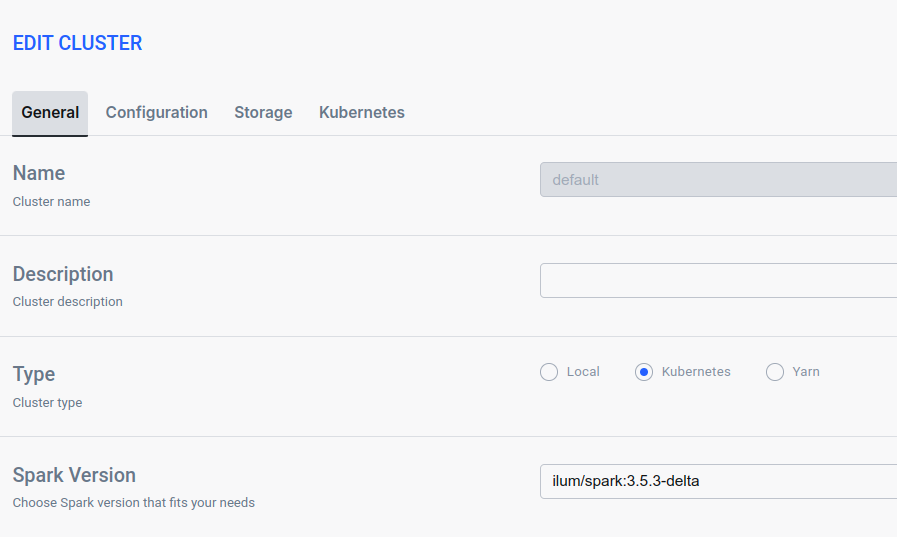

Option A: General Tab (Spark Version)

Navigate to the General tab of your cluster settings. Locate the Spark Version field and enter your custom image tag (e.g., myPrivateRepo/spark:3.5.7-iceberg).

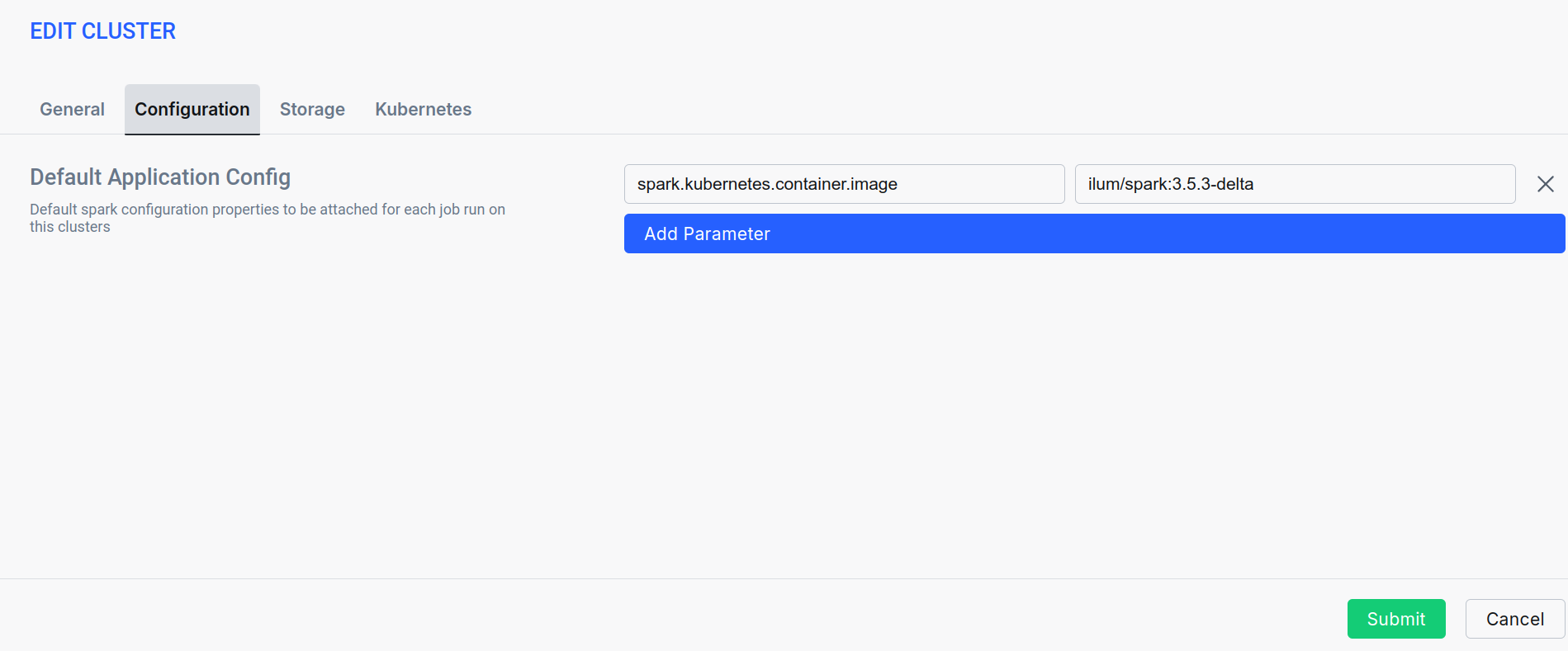

Option B: Configuration Tab

Navigate to the Configuration tab. Add a new parameter spark.kubernetes.container.image and set its value to your custom image.

Best Practices

- Keep all dependency versions aligned with the Spark version used.

- Regularly update the custom image to include security patches and the latest dependency versions.

- Store images in a reliable and accessible container registry.

- Use a versioning scheme for your images (e.g., include Spark and feature versions in the tag).
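A tagging scheme like the one suggested in the last point can be made mechanical. The following is a minimal sketch, not part of Ilum: `image_tag` is a hypothetical helper that joins a repository, a Spark version, and a feature label into a tag such as the one used in this guide, and rejects values that would not form a valid Docker tag.

```python
import re


def image_tag(repository: str, spark_version: str, feature: str) -> str:
    """Compose '<repository>:<spark_version>-<feature>' (hypothetical helper)."""
    # Expect a full three-part Spark version such as 3.5.7.
    if not re.fullmatch(r"\d+\.\d+\.\d+", spark_version):
        raise ValueError(f"unexpected Spark version: {spark_version!r}")
    tag = f"{spark_version}-{feature}"
    # Docker tags allow letters, digits, '_', '.', and '-', up to 128 characters.
    if not re.fullmatch(r"[A-Za-z0-9_][A-Za-z0-9_.-]{0,127}", tag):
        raise ValueError(f"invalid image tag: {tag!r}")
    return f"{repository}:{tag}"


print(image_tag("myPrivateRepo/spark", "3.5.7", "iceberg"))
```

Generating the tag from the Spark version keeps the image name and its base image from drifting apart.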

Troubleshooting

Common Image Issues

| Issue | Solution |

|---|---|

| Dependency mismatch | Ensure all JARs and Python packages are compatible with the Spark version in use. |

| Image not found | Verify the image name and that it was pushed to the correct registry (and that Ilum has access to that registry). |

| Job fails due to missing dependencies | Double-check that the Spark job is using the intended custom image (check the image configuration in Ilum or the spark-submit command). |
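The dependency mismatch case can be partly checked before an image is built. As an illustration only, the hypothetical helper below assumes the artifact follows the `<name>-<sparkMajor.Minor>_<scalaVersion>` naming used by `iceberg-spark-runtime-3.5_2.12` and compares its Spark line with the Spark version of the image. Other libraries may use different naming, so treat this as a sketch.

```python
import re


def runtime_matches_spark(artifact_id: str, spark_version: str) -> bool:
    """Return True if the artifact targets the same Spark major.minor line.

    Assumes an artifact id ending in '-<spark major.minor>_<scala version>',
    for example 'iceberg-spark-runtime-3.5_2.12'.
    """
    match = re.search(r"-(\d+\.\d+)_(\d+\.\d+)$", artifact_id)
    if match is None:
        raise ValueError(f"cannot read Spark/Scala versions from {artifact_id!r}")
    spark_line = ".".join(spark_version.split(".")[:2])
    return match.group(1) == spark_line


print(runtime_matches_spark("iceberg-spark-runtime-3.5_2.12", "3.5.7"))
print(runtime_matches_spark("iceberg-spark-runtime-3.4_2.12", "3.5.7"))
```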

2. Runtime Injection (Spark Packages & PyPI)

For rapid development and testing, you can add dependencies dynamically using Spark’s configuration. This approach fetches JARs and installs Python packages at startup time.

Adding Java JARs

Specify Maven coordinates for Java dependencies using the spark.jars.packages configuration.

- UI (Job & Service)

- Helm (Install Time)

- Global Default (Cluster Config)

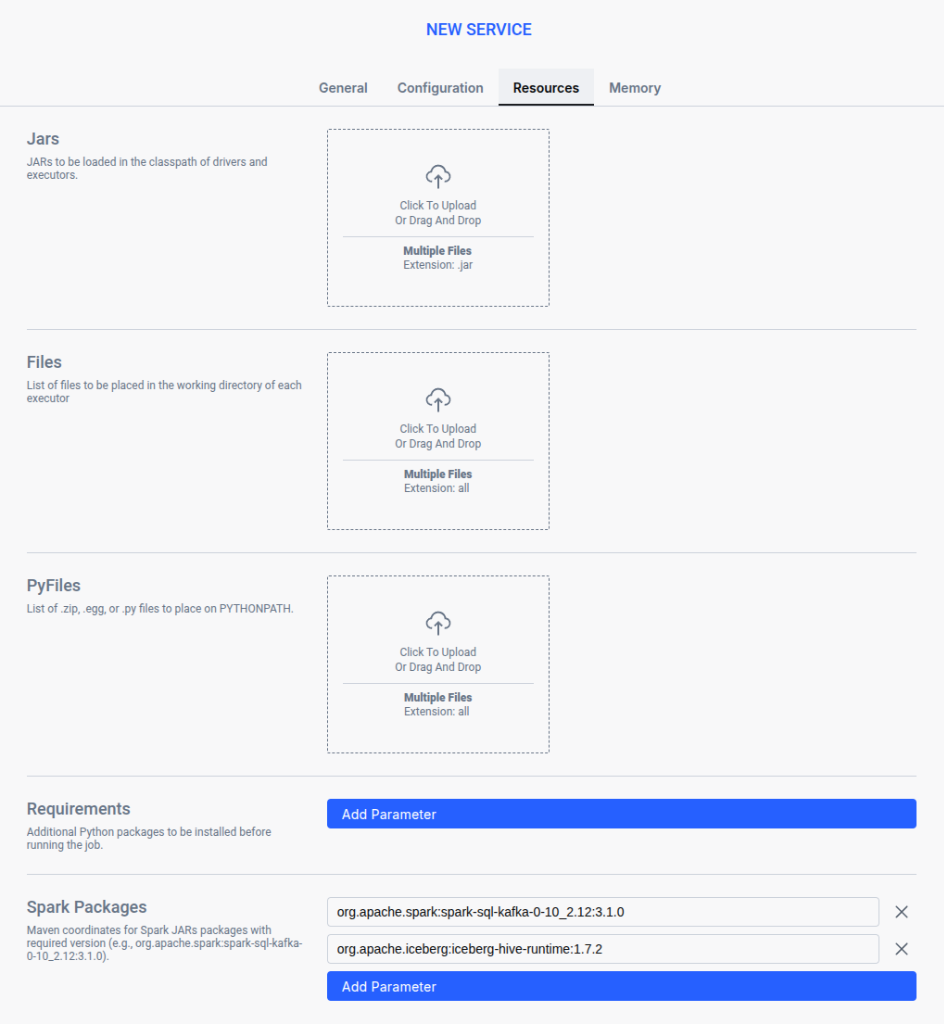

For individual Jobs or Services, you can add packages directly in the Resources tab.

- Navigate to New Job or New Service.

- Go to the Resources tab.

- Scroll to Spark Packages.

- Click Add Parameter and enter the Maven coordinate (e.g., org.apache.hadoop:hadoop-aws:3.3.4).

During the installation process: Add this flag to your helm install command:

--set ilum-core.kubernetes.defaultCluster.config.spark\\.jars\\.packages="org.apache.iceberg:iceberg-spark-runtime-3.5_2.12:1.8.0\,org.apache.hadoop:hadoop-aws:3.3.4"
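Helm's `--set` parser treats an unescaped dot in a key as a nesting separator and an unescaped comma in a value as the start of another assignment, so both need a backslash when a Spark property is passed this way. The sketch below shows that escaping under those rules; `helm_set_arg` is a hypothetical helper, not an Ilum or Helm API.

```python
import shlex


def helm_set_arg(values_path: str, spark_key: str, value: str) -> str:
    """Build the argument Helm should receive after --set (hypothetical helper)."""
    # Dots inside the Spark property name must not be read as YAML nesting.
    escaped_key = spark_key.replace(".", "\\.")
    # Commas inside the value must not be read as separators between assignments.
    escaped_value = value.replace(",", "\\,")
    return f"{values_path}.{escaped_key}={escaped_value}"


arg = helm_set_arg(
    "ilum-core.kubernetes.defaultCluster.config",
    "spark.jars.packages",
    "org.apache.iceberg:iceberg-spark-runtime-3.5_2.12:1.8.0,"
    "org.apache.hadoop:hadoop-aws:3.3.4",
)
# shlex.quote single-quotes the argument so the shell leaves the backslashes alone.
print("--set", shlex.quote(arg))
```

Single-quoting the whole argument avoids having to double the backslashes for the shell.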

To define default packages for all jobs on a cluster, set this property in the Cluster Configuration form:

spark.jars.packages: org.apache.iceberg:iceberg-spark-runtime-3.5_2.12:1.8.0,org.apache.hadoop:hadoop-aws:3.3.4

When the job starts, Spark automatically downloads the specified packages (and their transitive dependencies) from Maven Central or the configured repository.
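Because a malformed coordinate only surfaces when the job starts, it can help to validate the list beforehand. The following sketch assumes the plain `groupId:artifactId:version` form used in this guide; `parse_coordinate` and `jars_packages_value` are hypothetical helper names.

```python
from typing import Iterable, NamedTuple


class MavenCoordinate(NamedTuple):
    group_id: str
    artifact_id: str
    version: str


def parse_coordinate(text: str) -> MavenCoordinate:
    """Split 'groupId:artifactId:version' and reject incomplete coordinates."""
    parts = text.strip().split(":")
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected groupId:artifactId:version, got {text!r}")
    return MavenCoordinate(*parts)


def jars_packages_value(coordinates: Iterable[str]) -> str:
    """Join validated coordinates into a comma-separated spark.jars.packages value."""
    return ",".join(":".join(parse_coordinate(c)) for c in coordinates)


print(jars_packages_value([
    "org.apache.iceberg:iceberg-spark-runtime-3.5_2.12:1.8.0",
    "org.apache.hadoop:hadoop-aws:3.3.4",
]))
```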

Installing Python Dependencies in Ilum

Ilum provides multiple ways to install Python dependencies for Spark jobs and Jupyter sessions. Depending on your use case, you can choose between:

- UI (Job & Service)

- Jupyter Session

- Global Default (Cluster Config)

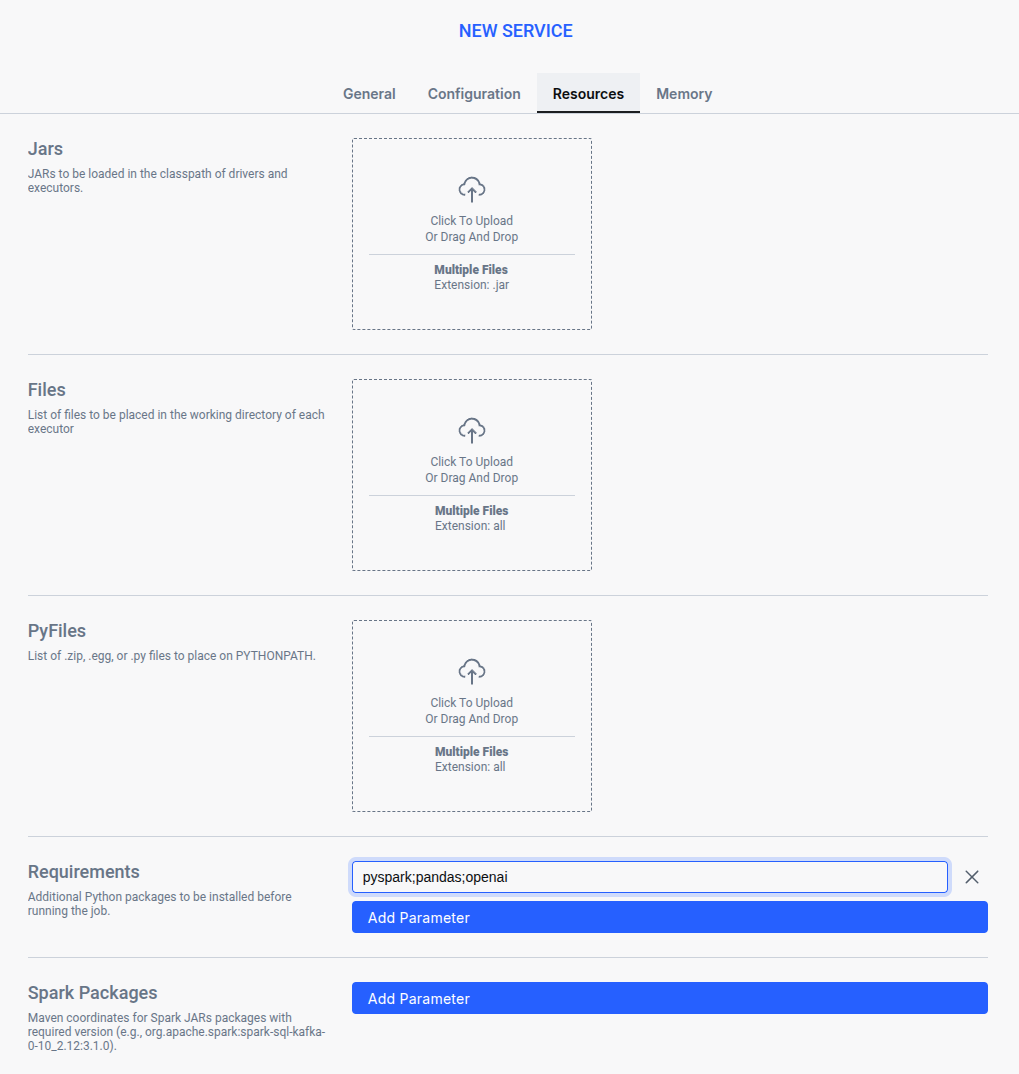

Ilum makes it easy to add Python dependencies when creating Spark Jobs or Interactive Services directly from the UI. The process is identical for both.

- Navigate to New Job or New Service in the Ilum UI (see Running Spark Jobs).

- Locate the Requirements field under the Resources tab.

- Enter the required Python dependencies.

Ilum will install these dependencies at runtime before executing the application.

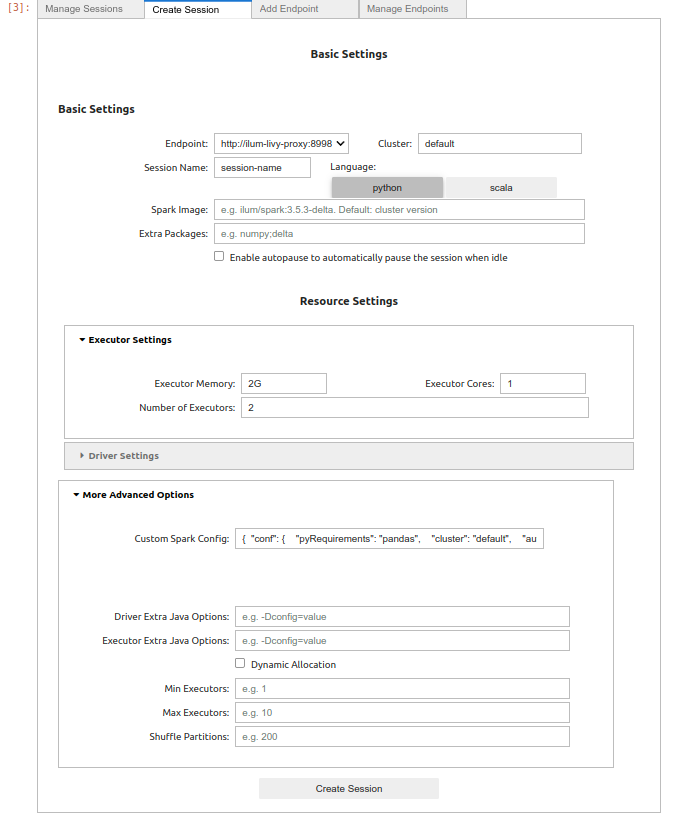

There are two ways to configure dependencies for Jupyter: per-session or globally for all sessions.

Option A: Per-Session (Session Creation Form)

When creating a Jupyter Notebook session, you can specify required Python packages directly in the session creation form.

- Open the Create Session form (e.g., via the %manage_sparkmagic command).

- Locate the Extra Packages field.

- Enter the required packages as a semicolon-separated list:

Extra Packages

pandas;numpy;openai

- When the session starts, Ilum will automatically install these libraries.

Option B: Global Default for Jupyter (Helm/ConfigMap)

To define default packages for all Jupyter Spark sessions (but not standard Spark jobs):

Install Time:

--set ilum-jupyter.sparkmagic.config.sessionConfigs.conf='{"pyRequirements":"pandas;numpy;openai"}'

Post-Install (ConfigMap):

Modify the ilum-jupyter-config configMap:

data:

  config.json: |

    ...

    {

      "session_configs": {

        "conf": { "pyRequirements": "pandas;numpy;openai", ... }

      }

    }

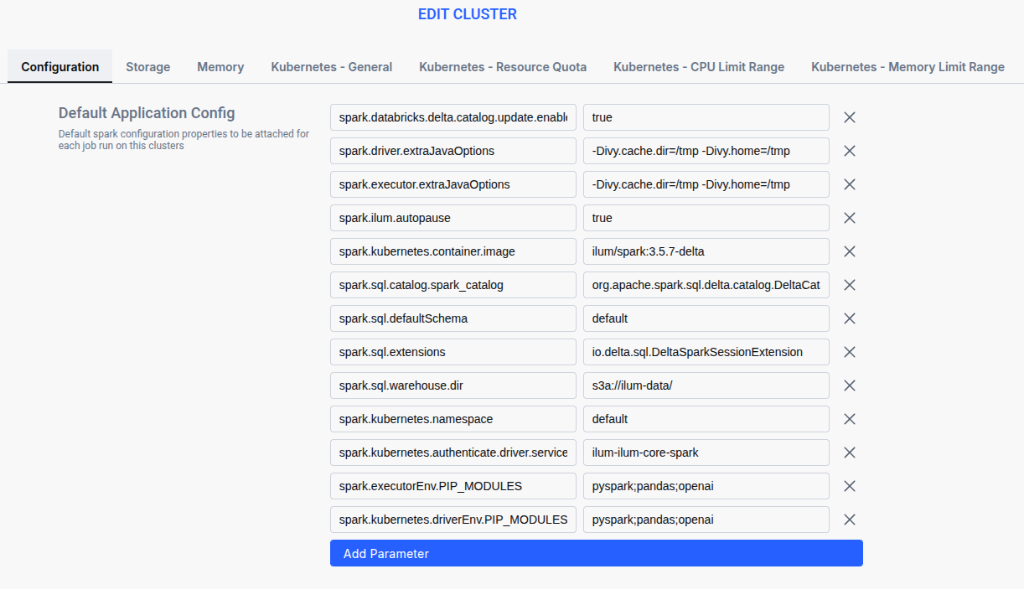

To define default Python packages for all Spark applications running on a specific cluster (including jobs and interactive sessions started by external modules like Jupyter or Airflow), add environment variables in the Cluster Configuration.

You need to add two properties with the same list of semicolon-separated packages:

- spark.executorEnv.PIP_MODULES

- spark.kubernetes.driverEnv.PIP_MODULES

Steps:

- Go to Clusters and edit your target cluster (or configure it during creation).

- Navigate to the Configuration tab.

- Add the parameters:

Cluster Parameters

spark.executorEnv.PIP_MODULES=pyspark;pandas;openai

spark.kubernetes.driverEnv.PIP_MODULES=pyspark;pandas;openai
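Since the driver and executor properties must carry the same list, generating both from one source avoids drift between them. This is a minimal sketch with a hypothetical helper name, `pip_modules_conf`; only the two property names and the semicolon separator come from this guide.

```python
from typing import Dict, Iterable


def pip_modules_conf(packages: Iterable[str]) -> Dict[str, str]:
    """Return both PIP_MODULES properties with an identical package list."""
    cleaned = [p.strip() for p in packages if p.strip()]
    # A semicolon inside a package name would be read as a list separator.
    if any(";" in p for p in cleaned):
        raise ValueError("package names must not contain ';'")
    value = ";".join(cleaned)
    return {
        "spark.executorEnv.PIP_MODULES": value,
        "spark.kubernetes.driverEnv.PIP_MODULES": value,
    }


for key, value in pip_modules_conf(["pyspark", "pandas", "openai"]).items():
    print(f"{key}={value}")
```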

Each approach ensures your Spark jobs and Jupyter sessions have the necessary dependencies installed, so you can focus on data engineering and analysis instead of managing environments.

Best Practices

- Use this method for testing or proof-of-concept jobs; avoid it for production due to the overhead of downloading dependencies on each run.

- Specify exact versions for packages to ensure reproducibility.

- Combine this approach with custom Docker images for better consistency (e.g., use Docker for core dependencies and spark.jars.packages for a few transient ones if needed).

- Be mindful of network access and performance, as downloading packages can slow down startup times.
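The advice to specify exact versions can be checked automatically. The sketch below assumes pip-style `name==version` specifiers are accepted wherever the package list is entered, which this guide does not confirm; `unpinned` is a hypothetical helper and the version numbers are illustrative.

```python
import re
from typing import Iterable, List

# A name, optional extras in brackets, then an exact '==' version pin.
PINNED = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]+\])?==[^=\s]+$")


def unpinned(requirements: Iterable[str]) -> List[str]:
    """Return the requirements that do not pin an exact version."""
    return [r for r in requirements if not PINNED.match(r.strip())]


print(unpinned(["pandas==2.2.2", "numpy", "pyiceberg[hive]==0.8.1", "openai>=1.0"]))
```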

Troubleshooting

Common Dependency Issues

| Issue | Solution |

|---|---|

| JAR not found | Ensure the Maven coordinates (groupId, artifactId, version) are correct. |

| Startup Performance | If startup is slow or OOMs occur, consider baking dependencies into a Docker image. |

3. Installing Libraries in Jupyter Notebooks with pip install

For quick interactive experiments, you can install libraries within a Jupyter notebook using pip. This is a fast way to test something in an ad-hoc manner, but it is not recommended for anything beyond temporary exploration.

Example

If you are running a Spark session in an Ilum Jupyter notebook and need a new Python package, you can install it like so:

%%spark

import subprocess

import sys

# Install the package with the interpreter that runs this session

result = subprocess.check_output([sys.executable, "-m", "pip", "install", "geopandas"])

print(result.decode())

# Verify installation

result = subprocess.check_output([sys.executable, "-m", "pip", "list"])

print(result.decode())

This will install the package in the notebook’s environment so you can use it immediately.
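Listing installed packages does not guarantee that a module can be imported in the running interpreter. As a hedged sketch, the hypothetical helper `missing_modules` below uses the standard library to report which top-level modules still cannot be found. In a notebook it would run in the same cell type as the install step.

```python
import importlib
import importlib.util
from typing import Iterable, List


def missing_modules(names: Iterable[str]) -> List[str]:
    """Return the top-level module names that cannot be found for import."""
    # Refresh the import system's caches so freshly installed packages are seen.
    importlib.invalidate_caches()
    return [name for name in names if importlib.util.find_spec(name) is None]


print(missing_modules(["json", "surely_not_installed_pkg_xyz"]))
```

An empty list means every module was located. A non-empty list after a successful install usually points to a different interpreter or a session that needs restarting.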

Why It’s Not Recommended

- Packages installed this way are only available in the current Spark session.

- The environment does not persist across session restarts or new sessions.

- It can lead to inconsistencies between your development environment and the production Spark runtime.

Best Practices

- Use this approach only for quick, throwaway prototyping.

- If you find yourself relying on a pip-installed library, add it to a requirements file or Docker image for permanence.

- Document any packages you had to install in the notebook so you can update your environment properly later.

Troubleshooting

Pip Install Issues

| Issue | Solution |

|---|---|

| Package not found | Check spelling and availability on PyPI. |

| Module not found | Try restarting the notebook kernel to reload the environment. |

Frequently Asked Questions (FAQ)

How do I install private Python packages in Spark?

You can install private packages by building a Custom Docker Image (Method 1). During the docker build process, you can pass credentials or use a pip configuration file to authenticate with your private PyPI repository. Alternatively, for runtime injection, you may need to configure a custom pip index URL in your environment, but Docker is more secure for handling credentials.

Should I use Docker or runtime requirements for Spark on Kubernetes?

For production, always use a Docker image. It guarantees that every node (driver and executors) has the exact same environment without the latency and failure risk of installing packages at runtime. Use runtime requirements only for development, testing, or very small, non-critical libraries.

How do I add JDBC drivers to Ilum Spark jobs?

JDBC drivers (like PostgreSQL, MySQL, or Snowflake) are best added as JARs. You can either:

- Add the JAR to your Docker image (e.g., in $SPARK_HOME/jars).

- Use spark.jars.packages (Method 2) to fetch them from Maven Central at runtime (e.g., org.postgresql:postgresql:42.6.0).

Final Recommendations

- Production workloads: Use a custom Docker image with all dependencies pre-installed. This yields a stable and reproducible environment with faster startup times.

- Testing or prototyping: Use spark.jars.packages and a pyrequirements.txt for flexibility. This allows you to experiment quickly without building a new image, though it may incur startup overhead.

- Interactive experiments: Installing via Jupyter notebooks is convenient for short-lived experiments, but always transition to a more robust solution (Docker image or requirements file) for anything that needs to be saved or run again.

By following these practices, you can efficiently manage Spark dependencies in Ilum while minimizing compatibility issues and runtime errors.