Create a Local Ilum Cluster for Spark Development

A step-by-step guide on how to deploy a local development cluster in Ilum.

Why Create a Local Ilum Cluster?

Ilum enables multi-cluster architecture management from a single central control plane, automating complex configurations. You only need to register your cluster in Ilum and configure basic networking. To learn more about cluster management concepts in Ilum, read the architecture overview.

This guide covers the creation of a local cluster—a simulation environment launched inside the ilum-core server. This is ideal for testing Ilum's cluster management capabilities without requiring external Kubernetes infrastructure.

Demo

Here you can see a demo on how to add a local cluster to Ilum.

Step-by-Step Deployment Guide

Step 1: Navigate to Cluster Creation

- Go to the Clusters section in the Workload menu.

- Click on the New Cluster button.

Step 2: Configure General Settings

- Name & Description: Choose a descriptive name and description to help your team understand the cluster's purpose.

- Cluster Type: Set the cluster type to Local.

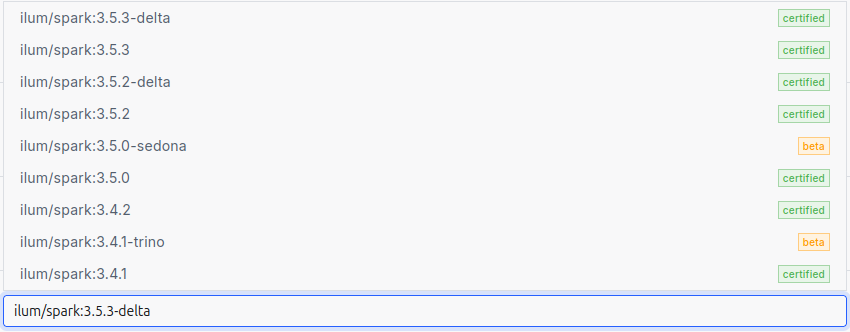

- Spark Version: Select the appropriate Spark image version.

The Spark version is defined by the container image used for Spark jobs. Ilum uses optimized images with pre-installed dependencies.

Here is a list of available Spark images:

Step 3: Define Spark Configurations

Any Spark configurations specified at the cluster level will be automatically applied to every job deployed on that cluster.

Why use this? It eliminates repetitive configuration. For instance, if you use Apache Iceberg as your Spark catalog, you can configure it once globally here. All Ilum jobs on this cluster will inherit these settings.
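To make the Iceberg example concrete, here is a minimal sketch of the kind of key-value pairs you might enter at the cluster level. The property keys are standard Apache Iceberg Spark catalog settings; the catalog name `demo`, the `hadoop` catalog type, and the warehouse path are assumptions chosen for illustration, and the sketch also assumes the Iceberg runtime is available in the Spark image you selected.

```scala
// Hypothetical values: the catalog name and warehouse path are illustrative only.
val catalogName = "demo"
val warehousePath = "s3a://ilum-files/warehouse"

// Standard Iceberg catalog properties, expressed as Spark configuration pairs.
val icebergConf: Map[String, String] = Map(
  "spark.sql.extensions" ->
    "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions",
  s"spark.sql.catalog.${catalogName}" -> "org.apache.iceberg.spark.SparkCatalog",
  s"spark.sql.catalog.${catalogName}.type" -> "hadoop",
  s"spark.sql.catalog.${catalogName}.warehouse" -> warehousePath
)

// Print the pairs in key=value form, ready to copy into the configuration fields.
icebergConf.toSeq.sortBy(_._1).foreach { case (key, value) =>
  println(s"$key=$value")
}
```

Defining these once on the cluster means individual jobs do not need to repeat them.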

Step 4: Integrate Object Storage (S3/MinIO)

Ilum uses a default cluster storage to manage job files (both user code and Ilum internal artifacts). You can connect various storage providers: S3, GCS, WASBS, or HDFS.

In this guide, we will configure an S3 bucket using the MinIO storage that comes deployed by default with the Ilum cluster.

- Click on the "Add storage" button.

- Name & Type: Specify a name and select S3 as the storage type.

- Spark Bucket: Set to ilum-files. This is the main bucket for Ilum Job files (default in the local MinIO).

- Data Bucket: Set to ilum-files. This is required for using Ilum Tables (Spark format).

- Endpoint & Credentials:

  - Endpoint: ilum-minio:9000

  - Access Key: minioadmin

  - Secret Key: minioadmin

  (These are the default credentials for the internal MinIO instance.)

- Click the "Submit" button.

You can add multiple storage backends; Ilum Jobs will be configured to interact with all of them.
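For readers who know Spark's S3A connector, the form fields above correspond roughly to the standard Hadoop S3A properties sketched below. This is not a description of how Ilum wires the storage internally; it only illustrates what each field means in plain Spark terms. The `http://` scheme, path-style access, and disabled SSL are assumptions that are typical for an in-cluster MinIO, not values taken from this guide.

```scala
// Values from the storage form in this guide (default internal MinIO).
val endpoint = "ilum-minio:9000"
val accessKey = "minioadmin"
val secretKey = "minioadmin"

// Roughly equivalent standard Hadoop S3A settings, as Spark configuration pairs.
val minioConf: Map[String, String] = Map(
  // Assumption: plain HTTP inside the cluster.
  "spark.hadoop.fs.s3a.endpoint" -> s"http://$endpoint",
  "spark.hadoop.fs.s3a.access.key" -> accessKey,
  "spark.hadoop.fs.s3a.secret.key" -> secretKey,
  // Assumption: MinIO is usually addressed with path-style URLs.
  "spark.hadoop.fs.s3a.path.style.access" -> "true",
  "spark.hadoop.fs.s3a.connection.ssl.enabled" -> "false"
)

minioConf.toSeq.sortBy(_._1).foreach { case (key, value) =>
  println(s"$key=$value")
}
```

In practice you should not need to set these by hand for storage registered through the form.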

Step 5: Finalize Local Cluster Resources

Navigate to the local cluster configurations. Here, use the slider to allocate the number of Java threads used for the local cluster simulation.

Finally, click the Submit button to create your cluster.

Step 6: Verify Deployment with a Test Job

To ensure your local cluster is functioning correctly, create and run a simple Spark job.

- Navigate to Cluster: Go to the Clusters section and select your new local cluster.

- Create Service: Click New Service. Enter a name and select Code as the type.

- Launch Editor: Find your new service in the list and click Execute.

- Run Spark Code: In the code panel, paste the following Scala code.

This script creates a simple DataFrame and writes it to the S3 storage we configured earlier.

    // 1. Create sample data
    val data = Seq(("Alice", 29), ("Bob", 35), ("Cathy", 23))

    // 2. Convert to DataFrame with defined columns
    val df = spark.createDataFrame(data).toDF("name", "age")

    // 3. Define output path (pointing to our MinIO bucket)
    val s3Path = "s3a://ilum-files/output"

    // 4. Write data to S3 in CSV format
    df.write.format("csv").mode("overwrite").save(s3Path)

- Click Execute.

If the job completes successfully, your local Ilum cluster is correctly configured and ready for development!
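As an optional extra check, you could read the files back in the same code session to confirm that the data actually landed in the bucket. This is a minimal sketch that assumes the `spark` session provided by the code service (as in the job above) and the same output path; the name `readBack` is introduced here only for illustration.

```scala
// `spark` is assumed to be the SparkSession supplied by the Ilum code service.
val s3Path = "s3a://ilum-files/output"

// The job wrote CSV without a header row, so columns are renamed after loading.
val readBack = spark.read.format("csv").load(s3Path).toDF("name", "age")

// Display the rows and report how many were found.
readBack.show()
println(s"Rows read back: ${readBack.count()}")
```

If the write succeeded, the row count should match the three sample rows. Note that values read from a headerless CSV without a schema are loaded as strings.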