Nessie Catalog

Overview

Project Nessie is an open-source transactional data catalog that brings Git-like version control to data lakes. It enables you to manage multiple versions of your data using branches, tags, and commits, similar to how Git manages source code.

With Ilum’s integration, you can leverage Nessie’s version control features directly in your Spark environment. This allows you to branch, tag, and merge data changes safely and efficiently.

Unlike traditional Hive or Glue catalogs, which only track the latest state of each table, Nessie records all changes as commits in a timeline. Each commit represents a consistent snapshot of your data lake. Changes are isolated until committed, ensuring incomplete or in-progress updates are never visible to other users or jobs. Once finalized, changes become atomically visible, guaranteeing consistency.

Key Features: Nessie vs. Traditional Catalogs

| Feature | Traditional Catalogs | Nessie Catalog |
|---|---|---|
| Branching | No | Yes (Git-like) |
| Isolated Environments | Manual/Complex | Simple, via branches |
| Commit History & Time Travel | Limited/Per-table | Full catalog history |
| Multi-table Transactions | No | Yes (atomic commits) |
| Collaboration & Governance | Minimal | Built-in, audit log |

Highlights

- Branching: Create multiple isolated branches (e.g., `main`, `dev`, `staging`) without duplicating data. Branches are lightweight pointers to metadata snapshots.

- Isolated Environments: Use the same data lake for dev, staging, and prod by isolating changes in branches. There is no need for separate catalogs or data copies.

- Commit History & Time Travel: Nessie maintains a unified commit log. Inspect, audit, or time-travel to any previous state by commit hash or timestamp.

- Atomic Multi-table Transactions: Commit changes across multiple tables as a single atomic operation. All succeed or none do.

- Collaboration & Governance: Work on separate branches, merge changes, and track who changed what and when. Enables safe experimentation and robust auditability.

Core Concepts

Branches

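A branch is an independent line of development for your data catalog.

A new branch starts from the current state of an existing branch and then tracks its own changes separately.

Branches are lightweight: creating one copies neither data files nor metadata. The new branch points at the same commit as its source, and only tables changed on the branch afterward get new metadata.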

The default branch is usually main.

Tags

A tag is a read-only label pointing to a specific commit: you cannot commit changes to a tag.

Use tags to mark stable versions or important milestones (e.g., v1.0, 2025-06-release). Tags act as bookmarks that do not move when new commits are made.

Commits

A commit is a set of changes recorded as a single atomic unit. Each commit has a unique ID, timestamp, author, and optional message. The commit log provides full catalog versioning.
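To make these three concepts concrete, here is a toy, in-memory Python model. The `ToyCatalog` class and all its names are hypothetical and are not Nessie's API or implementation; it only illustrates that a branch is a pointer to a commit, that a commit records changes to several tables as one unit, and that a tag is a read-only reference.

```python
from __future__ import annotations

import itertools
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    """One atomic change set, storing the full table -> metadata mapping after the commit."""
    commit_id: str
    parent: str | None
    author: str
    message: str
    timestamp: float
    tables: dict[str, str]


class ToyCatalog:
    """Hypothetical in-memory model of a Nessie-like catalog; not Nessie's API."""

    def __init__(self) -> None:
        self._next_id = itertools.count(1)
        self.commits: dict[str, Commit] = {}
        self.branches: dict[str, str | None] = {"main": None}  # branch -> head commit ID
        self.tags: dict[str, str] = {}  # tag -> commit ID

    def create_branch(self, name: str, from_ref: str = "main") -> None:
        # A branch is only a pointer: it starts at the source's head and nothing is copied.
        self.branches[name] = self.branches[from_ref]

    def create_tag(self, name: str, from_ref: str = "main") -> None:
        head = self.branches[from_ref]
        if head is None:
            raise ValueError("cannot tag a branch that has no commits")
        self.tags[name] = head

    def state(self, ref: str) -> dict[str, str]:
        head = self.tags[ref] if ref in self.tags else self.branches[ref]
        return dict(self.commits[head].tables) if head is not None else {}

    def commit(self, branch: str, changes: dict[str, str], author: str,
               message: str = "") -> str:
        if branch in self.tags:
            raise PermissionError("tags are read-only; commit to a branch instead")
        tables = self.state(branch)
        tables.update(changes)  # every table change goes into the same commit
        commit_id = f"c{next(self._next_id)}"
        self.commits[commit_id] = Commit(commit_id, self.branches[branch], author,
                                         message, time.time(), tables)
        # Moving the head is the single step that makes all changes visible at once.
        self.branches[branch] = commit_id
        return commit_id
```

In this model, creating `dev` copies only a commit ID, and a multi-table commit on `dev` leaves `main` untouched until the changes are merged.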

Using Nessie in Ilum

Nessie is not enabled by default in Ilum. To enable it, see the production page.

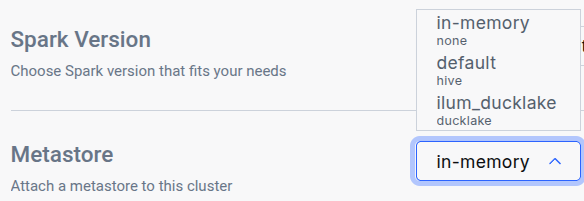

After enabling Nessie, navigate to the Edit Cluster tab for the cluster where you want to use the catalog and select it in the General metastore dropdown:

Ilum supports Project Nessie as a catalog for version-controlled data management. When using Ilum notebooks or Spark jobs with Apache Iceberg, you can perform Git-like operations (branching, merging, tagging) directly via SQL.

Using Nessie with Spark requires some additional configuration.

When Nessie is used as the main Ilum catalog, a catalog named `nessie_catalog` should already be configured in your Spark session.
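For Spark jobs or notebooks where `nessie_catalog` is not already configured, the sketch below builds a typical set of catalog properties. The property names come from the Apache Iceberg and Project Nessie Spark integrations; the function name and the example URI, warehouse, and endpoint values are assumptions, not Ilum's actual defaults. The Nessie URI ends in `/api/v1` or `/api/v2` depending on your Nessie and Iceberg versions.

```python
from __future__ import annotations


def nessie_catalog_conf(nessie_uri: str, warehouse: str, s3_endpoint: str,
                        catalog: str = "nessie_catalog", ref: str = "main") -> dict[str, str]:
    """Build Spark properties for an Iceberg catalog backed by Nessie (hypothetical helper)."""
    prefix = f"spark.sql.catalog.{catalog}"
    return {
        # Iceberg and Nessie SQL extensions; the latter provides CREATE BRANCH, MERGE BRANCH, etc.
        "spark.sql.extensions": ",".join([
            "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions",
            "org.projectnessie.spark.extensions.NessieSparkSessionExtensions",
        ]),
        prefix: "org.apache.iceberg.spark.SparkCatalog",
        f"{prefix}.catalog-impl": "org.apache.iceberg.nessie.NessieCatalog",
        f"{prefix}.uri": nessie_uri,  # e.g. http://<nessie-host>:19120/api/v2 (assumed host)
        f"{prefix}.ref": ref,  # branch the session starts on
        f"{prefix}.warehouse": warehouse,
        # Read and write table files with Iceberg's S3 client from iceberg-aws-bundle.
        f"{prefix}.io-impl": "org.apache.iceberg.aws.s3.S3FileIO",
        f"{prefix}.s3.endpoint": s3_endpoint,
        f"{prefix}.s3.path-style-access": "true",  # usually needed for MinIO
    }
```

Each pair can be passed to `SparkSession.builder.config(key, value)` in PySpark, or entered as a name/value pair in the cluster configuration.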

Additionally, make sure the Spark image used in your cluster has the Nessie client installed. In particular, you will need:

- Iceberg Spark Runtime (`org.apache.iceberg:iceberg-spark-runtime-<SPARK_VERSION>_<SCALA_VERSION>`): required for Nessie support.

- Iceberg AWS Bundle (`org.apache.iceberg:iceberg-aws-bundle`): required for S3 support.

- Nessie SQL Extensions (`org.projectnessie.nessie-integrations:nessie-spark-extensions-<SPARK_VERSION>_<SCALA_VERSION>`): required for Nessie-specific SQL operations.
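If you use your own Spark image, one way to pull these libraries at startup is Spark's `spark.jars.packages` property. The sketch below assembles the Maven coordinates. The helper name is hypothetical, the version arguments must be mutually compatible releases you choose (check the Iceberg and Nessie documentation), and the artifact suffix uses the Spark major.minor version, for example `3.5_2.12`.

```python
def nessie_packages(spark_version: str, scala_version: str,
                    iceberg_version: str, nessie_version: str) -> str:
    """Return a comma-separated coordinate list for spark.jars.packages (hypothetical helper)."""
    spark_minor = ".".join(spark_version.split(".")[:2])  # "3.5.1" -> "3.5"
    suffix = f"{spark_minor}_{scala_version}"
    return ",".join([
        f"org.apache.iceberg:iceberg-spark-runtime-{suffix}:{iceberg_version}",
        f"org.apache.iceberg:iceberg-aws-bundle:{iceberg_version}",
        f"org.projectnessie.nessie-integrations:nessie-spark-extensions-{suffix}:{nessie_version}",
    ])
```

Downloading packages at startup requires network access to a Maven repository, which is why a prebuilt image is usually preferable.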

You can also use Ilum’s custom Spark image for Nessie, `ilum/spark:<SPARK_VERSION>-nessie`, which includes all the required dependencies.

Ilum’s spark-nessie image does not include any Delta table dependencies, so you will need to remove the default cluster configuration for Delta tables if you use this image. In particular:

| Name | Value |
|---|---|
| spark.sql.catalog.spark_catalog | org.apache.spark.sql.delta.catalog.DeltaCatalog |
| spark.sql.extensions | io.delta.sql.DeltaSparkSessionExtension |
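If you manage cluster properties programmatically, removing exactly these two defaults could look like the sketch below. The constant and function names are hypothetical. After removal, `spark.sql.extensions` typically still needs to list the Iceberg and Nessie extensions for Nessie SQL to work, unless your image or cluster already sets them.

```python
from __future__ import annotations

# The default Delta entries from the table above (name -> value).
DELTA_DEFAULTS = {
    "spark.sql.catalog.spark_catalog": "org.apache.spark.sql.delta.catalog.DeltaCatalog",
    "spark.sql.extensions": "io.delta.sql.DeltaSparkSessionExtension",
}


def without_delta_defaults(cluster_conf: dict[str, str]) -> dict[str, str]:
    """Return a copy of cluster_conf without entries that match the Delta defaults exactly."""
    return {k: v for k, v in cluster_conf.items() if DELTA_DEFAULTS.get(k) != v}
```

Only exact matches are dropped, so a customized `spark.sql.extensions` value is left for you to review.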

Additionally, for Nessie’s AWS S3 support to work, you need to pass the credentials to Spark differently than with the Hive and Delta setup.

To pass the credentials, use the `spark.driver.extraJavaOptions` and `spark.executor.extraJavaOptions` Spark configuration options. Append the following options to both:

- `-Daws.region=<REGION>`: the default MinIO region is `us-east-1`.

- `-Daws.accessKeyId=<ACCESS_KEY_ID>`: the default MinIO access key is `minioadmin`.

- `-Daws.secretAccessKey=<SECRET_ACCESS_KEY>`: the default MinIO secret key is `minioadmin`.

For a more secure solution, the credentials should be built into the Spark image.
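The sketch below appends these system properties to both options without overwriting anything already set there. The helper name is hypothetical, and the `minioadmin` values used in testing are only MinIO's defaults, not something to use outside local development.

```python
from __future__ import annotations


def with_aws_java_options(conf: dict[str, str], region: str,
                          access_key_id: str, secret_access_key: str) -> dict[str, str]:
    """Return a copy of conf with AWS SDK system properties added to driver and executor JVMs."""
    props = (f"-Daws.region={region} -Daws.accessKeyId={access_key_id} "
             f"-Daws.secretAccessKey={secret_access_key}")
    updated = dict(conf)
    for key in ("spark.driver.extraJavaOptions", "spark.executor.extraJavaOptions"):
        existing = updated.get(key, "").strip()
        # Append rather than replace, so options already configured on the cluster survive.
        updated[key] = f"{existing} {props}".strip()
    return updated
```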

Nessie Walkthrough

Before creating any branches, create at least one table on the main branch. Otherwise, you may run into problems later when merging into an empty branch:

```sql
CREATE TABLE nessie_catalog.users (
    user_id INT,
    user_name VARCHAR(20)
);
```

Create a Branch

```sql
CREATE BRANCH dev IN nessie_catalog FROM main;
```

To verify, list all branches and tags:

```sql
LIST REFERENCES IN nessie_catalog;
```

Work on a Branch

Create a table and insert data on the dev branch by using a fully qualified table name in backticks (`<TableName>@<BranchName>`, or `<TableName>@<CommitHash>` to read a table as of a specific commit):

```sql
CREATE TABLE nessie_catalog.`sales@dev` (
    sale_timestamp CHAR(10),
    sale_amount INT,
    payment_method VARCHAR(20)
);

INSERT INTO nessie_catalog.`sales@dev` VALUES
    ('2025-06-01', 1000, 'Online'),
    ('2025-06-02', 1500, 'InStore'),
    ('2025-06-03', 800, 'Online'),
    ('2025-06-04', 1200, 'Mobile'),
    ('2025-06-05', 950, 'InStore');

SELECT COUNT(*) FROM nessie_catalog.`sales@dev`;
```
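When SQL is generated from code, for example in a PySpark notebook, a small helper keeps the backtick quoting of `<TableName>@<BranchName>` consistent. This is a hypothetical helper, not part of any Nessie or Ilum API; it only builds the string, which you would then pass to `spark.sql`.

```python
def nessie_table(table: str, ref: str, catalog: str = "nessie_catalog") -> str:
    """Return catalog.`table@ref`, where ref is a branch name or a commit hash."""
    if "`" in table or "`" in ref:
        # A backtick inside the name would break the quoted identifier.
        raise ValueError("backticks are not allowed in table or reference names")
    return f"{catalog}.`{table}@{ref}`"
```

For example, `f"SELECT COUNT(*) FROM {nessie_table('sales', 'dev')}"` produces the same query as the last statement above.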

Alternatively, use the USE statement to switch the session context to a specific branch:

```sql
USE BRANCH dev IN nessie_catalog;
SELECT COUNT(*) FROM nessie_catalog.sales;
```

Because Ilum’s SQL executor treats each query as a stateless entity, using the USE statement requires executing all related statements together.

To do this, select the entire query block in the editor and then press execute.
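Outside the SQL editor, for example in a PySpark notebook, successive `spark.sql` calls share one SparkSession, so the USE context is expected to carry over between them; this is an assumption worth verifying on your setup. The hypothetical helper below runs USE and the follow-up statements in order through the same callable; pass `spark.sql` as `sql`.

```python
from __future__ import annotations

from typing import Any, Callable, Sequence


def run_on_branch(sql: Callable[[str], Any], branch: str, statements: Sequence[str],
                  catalog: str = "nessie_catalog") -> list[Any]:
    """Switch to branch, then run each statement through the same session callable."""
    results = [sql(f"USE BRANCH {branch} IN {catalog}")]
    # Same callable, same session: later statements see the branch selected above.
    results.extend(sql(stmt) for stmt in statements)
    return results
```

The session stays on the branch afterward, so switch back explicitly if later code expects `main`.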

To show the commit log of the dev branch:

```sql
SHOW LOG ON dev IN nessie_catalog;
```

Merge Branches

```sql
MERGE BRANCH dev INTO main IN nessie_catalog;
SHOW TABLES IN nessie_catalog;
```

If the merge fails with the error `No common ancestor in parents of <X> and <Y>`, the branch you are merging into may be empty.

The merge fails in this case even if the branch being merged was correctly created from the target branch, which is why the walkthrough starts by creating a table on main.
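To see why an empty target can produce this symptom, here is a toy model. It is not Nessie's actual merge algorithm, and the function names are hypothetical: a branch head is a commit ID, or `None` for a branch with no commits, and a merge needs a commit that appears in both histories.

```python
from __future__ import annotations


def ancestors(head: str | None, parents: dict[str, str | None]) -> list[str]:
    """Walk parent links from head back to the first commit, newest first."""
    chain: list[str] = []
    while head is not None:
        chain.append(head)
        head = parents[head]
    return chain


def common_ancestor(a: str | None, b: str | None,
                    parents: dict[str, str | None]) -> str | None:
    """Return the newest commit of a's history that is also in b's history, or None."""
    seen = set(ancestors(b, parents))
    return next((c for c in ancestors(a, parents) if c in seen), None)
```

If `dev` was branched from an empty `main` and then received commit `d1`, `common_ancestor("d1", None, {"d1": None})` finds nothing to share. With one commit `m1` on `main` before branching, the shared ancestor is `m1`.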

Best Practices

- Develop in Isolation: Use branches for development or experiments. Promote changes through a hierarchy (e.g., dev → staging → main).

- Merge Frequently: Merge changes regularly to minimize conflicts.

- Keep Branches Short-Lived: Remove feature branches after merging.

- Avoid Conflicts: Sync your branch with the latest target branch before merging.

- Tag Milestones: Use tags for stable releases or important checkpoints.

- Document Changes: Add commit messages for traceability.
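Several of these practices can be combined into one promotion routine. The hypothetical helper below generates Nessie Spark SQL statements that sync and merge along a chain such as dev → staging → main, optionally tag the result, and drop the feature branch. MERGE BRANCH follows the syntax used in the walkthrough; the CREATE TAG and DROP BRANCH forms are taken from the Nessie Spark SQL extensions and should be checked against the reference for your version.

```python
from __future__ import annotations


def promotion_plan(chain: list[str], tag: str | None = None, drop_first: bool = True,
                   catalog: str = "nessie_catalog") -> list[str]:
    """Return SQL statements promoting changes along chain, e.g. ["dev", "staging", "main"]."""
    if len(chain) < 2:
        raise ValueError("need at least a source and a target branch")
    stmts: list[str] = []
    for source, target in zip(chain, chain[1:]):
        # Sync first: bring the target's latest commits into the source to surface conflicts early.
        stmts.append(f"MERGE BRANCH {target} INTO {source} IN {catalog}")
        stmts.append(f"MERGE BRANCH {source} INTO {target} IN {catalog}")
    if tag:
        # Mark the promoted state of the final branch as a milestone.
        stmts.append(f"CREATE TAG {tag} IN {catalog} FROM {chain[-1]}")
    if drop_first:
        # Keep branches short-lived: remove the feature branch once it is merged.
        stmts.append(f"DROP BRANCH {chain[0]} IN {catalog}")
    return stmts
```

Run the statements in order, for example `for stmt in promotion_plan(["dev", "main"], tag="release_2025_06"): spark.sql(stmt)`. Tag names containing dots, such as v1.0, would need backtick quoting, so the example uses an underscore name.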

Learn More

For advanced SQL operations and the full Nessie Spark SQL reference, see:

👉 Nessie Spark SQL Reference

Nessie with Ilum combines Spark’s power with Git-like data management, enabling robust “data as code” workflows for your lakehouse.